Welcome to H2O 3.0

- New Users

- Experienced Users

- Enterprise Users

- Sparkling Water Users

- Python Users

- R Users

- API Users

- Java Users

- Developers

Welcome to the H2O documentation site! Depending on your area of interest, select a learning path from the links above.

We’re glad you’re interested in learning more about H2O. If you have any questions, please email them to support@h2o.ai or post them on our Google group, h2ostream.

Note: To join our Google group on h2ostream, you need a Google account (such as Gmail or Google+). On the h2ostream page, click the Join group button, then click the New Topic button to post a new message. You don’t need to request to join or leave a message; you should be added to the group automatically.

We welcome your feedback! Please let us know if you have any questions or comments about this site by emailing us at support@h2o.ai.

New Users

If you’re just getting started with H2O, here are some links to help you learn more:

Downloads page: First things first: download a copy of H2O here by selecting a build under “Download H2O” (the “Bleeding Edge” build contains the latest changes, while the latest alpha release represents a more stable build), then use the installation instruction tabs to install H2O on your client of choice (standalone, R, Python, Hadoop, or Maven).

For first-time users, we recommend downloading the latest alpha release and the default standalone option (the first tab) as the installation method. Make sure to install Java if it is not already installed.

The following video provides step-by-step instructions on how to install and run H2O:

Tutorials: To see a step-by-step example of our algorithms in action, select a model type from the following list:

Getting Started with Flow: This document describes our new intuitive web interface, Flow. This interface is similar to IPython notebooks, and allows you to create a visual workflow to share with others.

Launch from the command line: This document describes some of the additional options that you can configure when launching H2O (for example, to specify a different directory for saved Flow data, allocate more memory, or use a flatfile for quick configuration of a cluster).

Algorithms: This document describes the science behind our algorithms and provides a detailed, per-algorithm view of each model type.

Experienced Users

If you’ve used previous versions of H2O, the following links will help guide you through the process of upgrading to H2O 3.0.

Migration Guide: This document provides a comprehensive guide to assist users in upgrading to H2O 3.0. It gives an overview of the changes to the algorithms and the web UI introduced in this version and describes the benefits of upgrading for users of R, APIs, and Java.

Porting R Scripts: This document is designed to assist users who have created R scripts using previous versions of H2O. Due to the many improvements in R, scripts created using previous versions of H2O need some revision to work with H2O 3.0. This document provides a side-by-side comparison of the changes in R for each algorithm, as well as overall structural enhancements R users should be aware of, and provides a link to a tool that assists users in upgrading their scripts.

Recent Changes: This document describes the most recent changes in the latest build of H2O. It lists new features, enhancements (including changed parameter default values), and bug fixes for each release, organized by sub-categories such as Python, R, and Web UI.

H2O Classic vs H2O 3.0: This document presents a side-by-side comparison of H2O 3.0 and the previous version of H2O. It compares and contrasts the features, capabilities, and supported algorithms between the versions. If you’d like to learn more about the benefits of upgrading, this is a great source of information.

Algorithms Roadmap: This document outlines our currently implemented features and describes which features are planned for future software versions. If you’d like to know what’s up next for H2O, this is the place to go.

Contributing code: If you’re interested in contributing code to H2O, we appreciate your assistance! This document describes how to access our list of JIRA issues that are suggested tasks for contributors and how to contact us.

Enterprise Users

If you’re considering using H2O in an enterprise environment, you’ll be happy to know that H2O supports many popular scalable computing solutions, such as Hadoop and EC2 (AWS). For more information, refer to the following links.

The following video provides step-by-step instructions on how to start H2O on Hadoop:

How to Pass S3 Credentials to H2O: This document describes the necessary step of passing your S3 credentials to H2O so that H2O can be used with AWS, as well as how to run H2O on an EC2 cluster.

Running H2O on Hadoop: This document describes how to run H2O on Hadoop.

Sparkling Water Users

Sparkling Water is a Gradle project with the following submodules:

- Core: Implementation of H2OContext, H2ORDD, and all technical integration code

- Examples: Applications, demos, and examples

- ML: Implementation of MLlib pipelines for H2O algorithms

- Assembly: Creates a “fat JAR” composed of all other modules

The best way to get started is to modify the core module or to create a new module that extends the project.

Users of our Spark-compatible solution, Sparkling Water, should be aware that Sparkling Water is only supported with the latest version of H2O. For more information about Sparkling Water, refer to the following links.

Getting Started with Sparkling Water

The following video provides step-by-step instructions on how to start H2O using Sparkling Water:

Download Sparkling Water: Go here to download Sparkling Water.

Sparkling Water Development Documentation: Read this document first to get started with Sparkling Water.

Launch on Hadoop and Import from HDFS: Go here to learn how to start Sparkling Water on Hadoop.

Sparkling Water Tutorials: Go here for demos and examples.

Sparkling Water K-means Tutorial: Go here to view a demo that uses Scala to create a K-means model.

Sparkling Water GBM Tutorial: Go here to view a demo that uses Scala to create a GBM model.

Sparkling Water on YARN: Follow these instructions to run Sparkling Water on a YARN cluster.

Building Applications on top of H2O: This short tutorial describes project building and demonstrates the capabilities of Sparkling Water using Spark Shell to build a Deep Learning model.

Sparkling Water FAQ: This FAQ provides answers to many common questions about Sparkling Water.

Sparkling Water Blog Posts

Sparkling Water Meetup Slide Decks

Python Users

Pythonistas will be glad to know that H2O now provides support for this popular programming language. Python users can also use H2O with IPython notebooks. For more information, refer to the following links.

The following video provides step-by-step instructions on how to start H2O using Python:

Python readme: This document describes how to set up and install the prerequisites for using Python with H2O.

Python docs: This document represents the definitive guide to using Python with H2O.

R Users

Don’t worry, R users: we still provide R support in the latest version of H2O, just as before. The R components of H2O have been cleaned up, simplified, and standardized, so the command format is easier and more intuitive. Due to these improvements, be aware that any scripts created with previous versions of H2O will need some revision to be compatible with the latest version.

We have provided the following helpful resources to assist R users in upgrading to the latest version, including a document that outlines the differences between versions and a tool that reviews scripts for deprecated or renamed parameters.

The following video provides step-by-step instructions on how to start H2O in R:

R User Documentation: This document contains all commands in the H2O package for R, including examples and arguments. It represents the definitive guide to using H2O in R.

Porting R Scripts: This document is designed to assist users who have created R scripts using previous versions of H2O. Due to the many improvements in R, scripts created using previous versions of H2O need some revision to work with H2O 3.0. This document provides a side-by-side comparison of the changes in R for each algorithm, as well as overall structural enhancements R users should be aware of, and provides a link to a tool that assists users in upgrading their scripts.

API Users

API users will be happy to know that the APIs have been more thoroughly documented in the latest release of H2O and additional capabilities (such as exporting weights and biases for Deep Learning models) have been added.

REST APIs are generated directly from the code, allowing users to implement machine learning in many ways. For example, REST APIs could be used to call a model created from sensor data and to set up automatic alerts if the sensor data falls below a specified threshold.

REST API Reference: This document represents the definitive guide to the H2O REST API.

REST API Schema Reference: This document represents the definitive guide to the H2O REST API schemas.

Java Users

For Java developers, the following resources will help you create your own custom app that uses H2O.

H2O Core Java Developer Documentation: The definitive Java API guide for the core components of H2O.

H2O Algos Java Developer Documentation: The definitive Java API guide for the algorithms used by H2O.

Developers

If you’re looking to use H2O to help you develop your own apps, the following links will provide helpful references.

For IntelliJ IDEA support, run gradle idea, then select Import Project within IDEA and point it to the h2o-3 directory.

For JUnit tests to pass, you may need multiple H2O nodes. Create a “Run/Debug” configuration with the following parameters:

Type: Application

Main class: H2OApp

Use class path of module: h2o-app

After you start multiple “worker” node processes in addition to the JUnit test process, the processes will cloud up (form a cluster) and run the multi-node JUnit tests.

Maven install: This page provides information on how to build a version of H2O that generates the correct IDE files.

apps.h2o.ai: Apps.h2o.ai is designed to support application developers via events, networking opportunities, and a new, dedicated website comprising developer kits and technical specs, news, and product spotlights.

H2O Project Templates: This page provides template info for projects created in Java, Scala, or Sparkling Water.

H2O Scala API Developer Documentation: The definitive Scala API guide for H2O.

Contributing code: If you’re interested in contributing code to H2O, we appreciate your assistance! This document describes how to access our list of JIRA issues that contributors can work on and how to contact us.

Downloading H2O

To download H2O, go to our downloads page. Select a build type (bleeding edge or latest alpha), then select an installation method (standalone, R, Python, Hadoop, or Maven) by clicking the tabs at the top of the page. Follow the instructions in the tab to install H2O.

Starting H2O …

There are a variety of ways to start H2O, depending on which client you would like to use.

… From R

To use H2O in R, follow the instructions on the download page.

… From Python

To use H2O in Python, follow the instructions on the download page.

… On Spark

To use H2O on Spark, follow the instructions on the Sparkling Water download page.

… From the Cmd Line

You can use Terminal (OS X) or the Command Prompt (Windows) to launch H2O 3.0. When you launch from the command line, you can include additional instructions to H2O 3.0, such as how many nodes to launch, how much memory to allocate for each node, what names to assign to the nodes in the cloud, and more.

There are two different argument types:

- JVM arguments

- H2O arguments

The arguments use the following format: java <JVM Options> -jar h2o.jar <H2O Options>.
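As a sketch of the argument layout above, the hypothetical Python helper below assembles a launch command as an argument list: JVM options go before -jar, and H2O options go after the jar name. Building a list lets you pass the command to subprocess without shell quoting. The function name and defaults are illustrative assumptions. Only the flags themselves come from this guide.

```python
from typing import List, Optional


def build_h2o_command(
    xmx: str = "1g",
    jar: str = "h2o.jar",
    name: Optional[str] = None,
    ip: Optional[str] = None,
    port: Optional[int] = None,
    flatfile: Optional[str] = None,
) -> List[str]:
    """Hypothetical helper: java <JVM Options> -jar h2o.jar <H2O Options>."""
    # JVM options come before -jar.
    cmd = ["java", f"-Xmx{xmx}", "-jar", jar]
    # H2O options come after the jar name; add only the ones that were given.
    if name is not None:
        cmd += ["-name", name]
    if ip is not None:
        cmd += ["-ip", ip]
    if port is not None:
        cmd += ["-port", str(port)]
    if flatfile is not None:
        cmd += ["-flatfile", flatfile]
    return cmd
```

For example, subprocess.Popen(build_h2o_command("2g")) would start one node in the background, assuming java is on the PATH and h2o.jar is in the working directory.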

JVM Options

-version: Display Java version info.

-Xmx<Heap Size>: To set the total heap size for an H2O node, configure the memory allocation option -Xmx. By default, this option is set to 1 Gb (-Xmx1g). When launching nodes, we recommend allocating a total of four times the memory of your data.

Note: Do not try to launch H2O with more memory than you have available.
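You can combine the four-times rule and the physical-memory limit into a quick check. This is a minimal sketch with some assumptions. recommended_xmx is a hypothetical helper. It rounds up to whole gigabytes, which is also an assumption. The 4x factor is the rule of thumb stated above, not a hard requirement.

```python
import math


def recommended_xmx(data_size_gb: float, physical_mem_gb: float, factor: float = 4) -> str:
    """Return an -Xmx flag of about `factor` x the data size, rounded up to whole GB."""
    heap_gb = max(1, math.ceil(data_size_gb * factor))
    # Never ask the JVM for more heap than the machine physically has.
    if heap_gb > physical_mem_gb:
        raise ValueError(
            f"{heap_gb} GB heap recommended, but only {physical_mem_gb} GB of physical memory"
        )
    return f"-Xmx{heap_gb}g"
```

For example, recommended_xmx(1.5, 16) returns "-Xmx6g". A 5 GB dataset on a 16 GB machine raises an error. That is the signal to move to a multi-node cluster rather than over-allocate a single node.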

H2O Options

-h or -help: Display this information in the command line output.

-name <H2OCloudName>: Assign a name to the H2O instance in the cloud (where <H2OCloudName> is the name of the cloud). Nodes with the same cloud name will form an H2O cloud (also known as an H2O cluster).

-flatfile <FileName>: Specify a flatfile of IP addresses for faster cloud formation (where <FileName> is the name of the flatfile).

-ip <IPnodeAddress>: Specify an IP address other than the default localhost for the node to use (where <IPnodeAddress> is the IP address).

-port <#>: Specify a port number other than the default 54321 for the node to use (where <#> is the port number).

-network <IPv4NetworkSpecification1>[,<IPv4NetworkSpecification2> ...]: Specify a range (where applicable) of IP addresses (where <IPv4NetworkSpecification1> represents the first interface, <IPv4NetworkSpecification2> represents the second, and so on). The IP address discovery code binds to the first interface that matches one of the networks in the comma-separated list. For example, 10.1.2.0/24 supports 256 possibilities.

-ice_root <fileSystemPath>: Specify a directory for H2O to spill temporary data to disk (where <fileSystemPath> is the file path).

-flow_dir <server-side or HDFS directory>: Specify a directory for saved flows. The default is /Users/h2o-<H2OUserName>/h2oflows (where <H2OUserName> is your user name).

-nthreads <#ofThreads>: Specify the maximum number of threads in the low-priority batch work queue (where <#ofThreads> is the number of threads). The default is 99.

-client: Launch the H2O node in client mode. This is used mostly for running Sparkling Water.
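The sketch below makes the -network matching rule concrete. It returns the first candidate address that falls inside any network of a comma-separated spec, which is the same first-match rule described for -network. It uses Python's standard ipaddress module and is an illustration only, not H2O's discovery code. pick_interface is a hypothetical helper, and the address list is an assumed input. On a real host, the candidates would be the machine's interface addresses, like the “Possible IP Address” lines in the startup log.

```python
import ipaddress
from typing import Optional, Sequence


def pick_interface(addresses: Sequence[str], network_spec: str) -> Optional[str]:
    """Return the first address inside any network of a comma-separated spec, else None."""
    networks = [ipaddress.ip_network(part.strip()) for part in network_spec.split(",")]
    for addr in addresses:
        ip = ipaddress.ip_address(addr)
        # Membership is simply False (not an error) when IPv4 and IPv6 are mixed.
        if any(ip in net for net in networks):
            return addr
    return None
```

For example, ipaddress.ip_network("10.1.2.0/24").num_addresses evaluates to 256, the count quoted above.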

Cloud Formation Behavior

New H2O nodes join to form a cloud during launch. After a job has started on the cloud, it prevents new members from joining.

To start an H2O node with 4GB of memory and a default cloud name:

java -Xmx4g -jar h2o.jar

To start an H2O node with 6GB of memory and a specific cloud name:

java -Xmx6g -jar h2o.jar -name MyCloud

To start an H2O cloud with three 2GB nodes using the default cloud name:

java -Xmx2g -jar h2o.jar &
java -Xmx2g -jar h2o.jar &
java -Xmx2g -jar h2o.jar &

Wait for the INFO: Registered: # schemas in: #mS output before entering the above command again to add another node (the number for # will vary).

Flatfile Configuration

If you are configuring many nodes, it is faster and easier to use the -flatfile option, rather than -ip and -port.

To configure H2O on a multi-node cluster:

- Locate a set of hosts.

- Download the appropriate version of H2O for your environment.

- Verify that the same h2o.jar file is available on all hosts.

Create a flatfile (a plain text file with the IP and port numbers of the hosts). Use one entry per line. For example:

192.168.1.163:54321
192.168.1.164:54321

- Copy the flatfile.txt to each node in the cluster.
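For clusters with many hosts, generating the flatfile avoids typos. The sketch below assumes every node uses the same port (54321 here). flatfile_content and write_flatfile are hypothetical helper names.

```python
from pathlib import Path
from typing import Iterable


def flatfile_content(hosts: Iterable[str], port: int = 54321) -> str:
    """One "ip:port" entry per line, matching the flatfile format shown above."""
    return "".join(f"{host}:{port}\n" for host in hosts)


def write_flatfile(hosts: Iterable[str], path: str = "flatfile.txt", port: int = 54321) -> Path:
    """Write the flatfile locally; you still need to copy it to every node."""
    out = Path(path)
    out.write_text(flatfile_content(hosts, port))
    return out
```

For example, write_flatfile(["192.168.1.163", "192.168.1.164"]) produces the two-line example above.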

Use the -Xmx option to specify the amount of memory for each node. The cluster’s memory capacity is the sum of the memory of all H2O nodes in the cluster. For example, if you create a cluster with four 20g nodes (by specifying -Xmx20g for each of the four nodes), H2O will have a total of 80 GB of memory available.

For best performance, we recommend sizing your cluster to be about four times the size of your data. To avoid swapping, the -Xmx allocation must not exceed the physical memory on any node. Allocating the same amount of memory for all nodes is strongly recommended, as H2O works best with symmetric nodes.

Note that the optional -ip and -port options specify the IP address and ports to use. The -ip option is especially helpful for hosts with multiple network interfaces.

java -Xmx20g -jar h2o.jar -flatfile flatfile.txt -port 54321

The output will resemble the following:

04-20 16:14:00.253 192.168.1.70:54321 2754 main INFO: 1. Open a terminal and run 'ssh -L 55555:localhost:54321 H2O-3User@###.###.#.##'
04-20 16:14:00.253 192.168.1.70:54321 2754 main INFO: 2. Point your browser to http://localhost:55555
04-20 16:14:00.437 192.168.1.70:54321 2754 main INFO: Log dir: '/tmp/h2o-H2O-3User/h2ologs'
04-20 16:14:00.437 192.168.1.70:54321 2754 main INFO: Cur dir: '/Users/H2O-3User/h2o-3'
04-20 16:14:00.459 192.168.1.70:54321 2754 main INFO: HDFS subsystem successfully initialized
04-20 16:14:00.460 192.168.1.70:54321 2754 main INFO: S3 subsystem successfully initialized
04-20 16:14:00.460 192.168.1.70:54321 2754 main INFO: Flow dir: '/Users/H2O-3User/h2oflows'
04-20 16:14:00.475 192.168.1.70:54321 2754 main INFO: Cloud of size 1 formed [/192.168.1.70:54321]

As you add more nodes to your cluster, the output is updated:

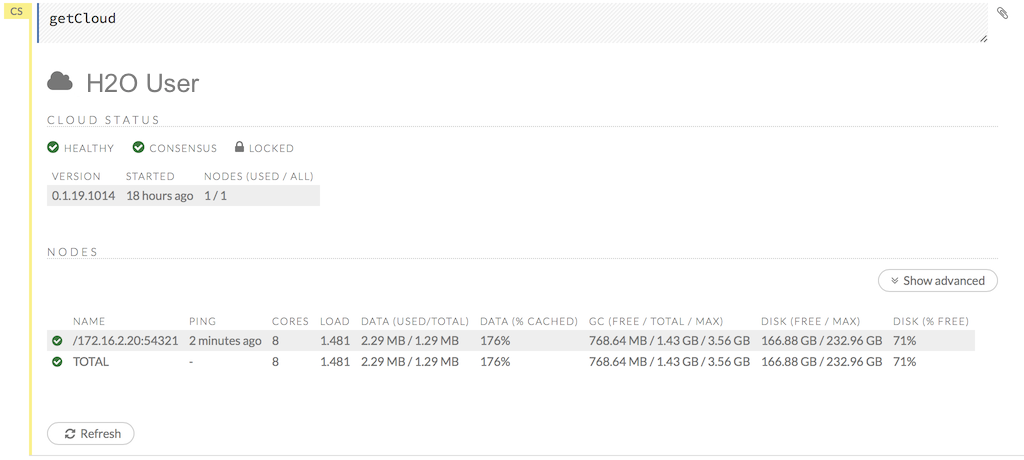

INFO WATER: Cloud of size 2 formed [/...]...

Access the H2O 3.0 web UI (Flow) with your browser. Point your browser to the HTTP address specified in the output line “Listening for HTTP and REST traffic on ...”.

To check if the cloud is available, point to the URL http://<ip>:<port>/Cloud.json (an example of the JSON response is provided below). Wait for cloud_size to be the expected value and the consensus field to be true:

{ ... "cloud_size": 2, "consensus": true, ... }

Manual Multi-node

Running H2O on a multi-node cluster allows you to use more memory for large-scale tasks (for example, creating models from huge datasets) than would be possible on a single node.

Locate a set of hosts that will be used to create your cluster. A host can be a server, an EC2 instance, or your laptop.

Download H2O, including the .jar file, by going to the H2O downloads page and choosing the appropriate version for your environment.

Verify the same h2o.jar file is available on each host in the multi-node cluster.

Create a flatfile.txt that contains an IP address and port number for each H2O instance. Use one entry per line. For example:

192.168.1.163:54321
192.168.1.164:54321

A flat file listing the nodes is the easiest way to get multiple H2O nodes to find each other and form a cluster. Note that the -flatfile option tells one H2O node where to find the others. It is not a substitute for the -ip and -port specification.

Copy the flatfile.txt to each node in your cluster.

Use the -Xmx option in the Java command line to specify the amount of memory allocated to each H2O node. The cluster’s memory capacity is the sum of the memory available across all H2O nodes in the cluster. For example, if you create a cluster with four 20g nodes (by specifying -Xmx20g on each node), H2O will have a total of 80 GB of memory available.

For best performance, we recommend creating a cluster about four times the size of your data. However, to avoid memory swapping, the -Xmx value must not be larger than the physical memory on any given node. We strongly recommend allocating the same amount of memory for all nodes, since H2O works best with symmetric nodes.
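You can check a planned cluster against the sizing guidance above before launch. The guidance says total memory should be about four times the data, -Xmx should never exceed physical memory, and every node should use the same -Xmx. check_cluster is a hypothetical helper. Its inputs are planning numbers that you supply. It does not query the machines.

```python
from typing import List, Sequence


def check_cluster(heaps_gb: Sequence[float], physical_gb: Sequence[float], data_gb: float) -> List[str]:
    """Return warnings for a planned cluster; an empty list means the plan follows the guidance."""
    warnings: List[str] = []
    total = sum(heaps_gb)
    # Capacity is the sum of every node's heap; aim for about 4x the data size.
    if total < 4 * data_gb:
        warnings.append(f"total heap {total:g} GB is below 4x the data size ({4 * data_gb:g} GB)")
    # -Xmx must not exceed a node's physical memory, or the node may swap.
    for i, (heap, phys) in enumerate(zip(heaps_gb, physical_gb)):
        if heap > phys:
            warnings.append(f"node {i}: -Xmx {heap:g} GB exceeds {phys:g} GB of physical memory")
    # H2O works best with symmetric nodes, so flag mixed -Xmx values.
    if len(set(heaps_gb)) > 1:
        warnings.append("nodes use different -Xmx values; symmetric nodes are recommended")
    return warnings
```

For example, check_cluster([20] * 4, [32] * 4, 20) returns an empty list. That matches the four-node, 80 GB example above sized for 20 GB of data.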

The optional -ip (not shown in the example below) and -port options tell this H2O node what IP address and ports (port and port+1 are used) to use. The -ip option is especially helpful for hosts that have multiple network interfaces.

$ java -Xmx20g -jar h2o.jar -flatfile flatfile.txt -port 54321

You will see output similar to the following:

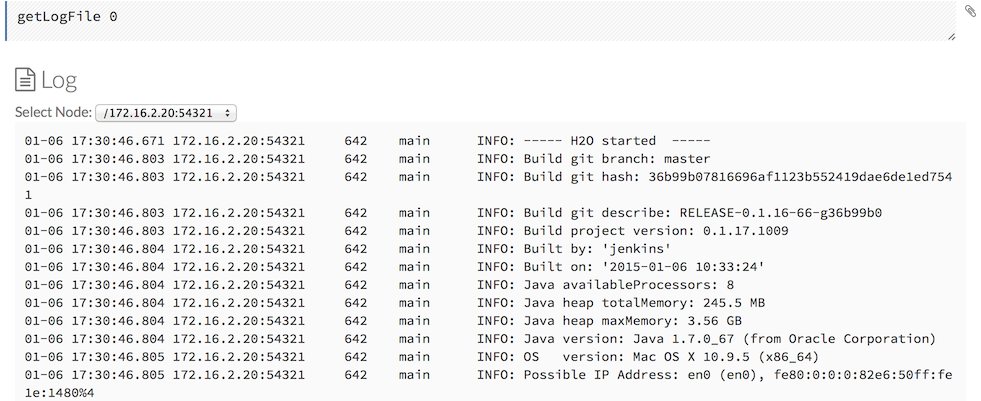

05-11 16:40:46.268 172.16.2.39:54322 34242 main INFO: ----- H2O started -----
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Build git branch: master
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Build git hash: 6c96387f893f3454912e20638dcb2f23a2786723
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Build git describe: jenkins-master-1192-10-g6c96387-dirty
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Build project version: 0.3.0.99999
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Built by: 'H2OUser'
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Built on: '2015-05-08 11:19:26'
05-11 16:40:46.337 172.16.2.39:54322 34242 main INFO: Java availableProcessors: 8
05-11 16:40:46.338 172.16.2.39:54322 34242 main INFO: Java heap totalMemory: 245.5 MB
05-11 16:40:46.338 172.16.2.39:54322 34242 main INFO: Java heap maxMemory: 17.78 GB
05-11 16:40:46.338 172.16.2.39:54322 34242 main INFO: Java version: Java 1.7.0_67 (from Oracle Corporation)
05-11 16:40:46.338 172.16.2.39:54322 34242 main INFO: OS version: Mac OS X 10.10.3 (x86_64)
05-11 16:40:46.338 172.16.2.39:54322 34242 main INFO: Machine physical memory: 16.00 GB
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: X-h2o-cluster-id: 1431387646125
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: Opted out of sending usage metrics.
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: Possible IP Address: en5 (en5), fe80:0:0:0:daeb:97ff:feb3:6d4b%4
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: Possible IP Address: en5 (en5), 172.16.2.39
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: Possible IP Address: lo0 (lo0), fe80:0:0:0:0:0:0:1%1
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: Possible IP Address: lo0 (lo0), 0:0:0:0:0:0:0:1
05-11 16:40:46.339 172.16.2.39:54322 34242 main INFO: Possible IP Address: lo0 (lo0), 127.0.0.1
05-11 16:40:46.340 172.16.2.39:54322 34242 main INFO: Internal communication uses port: 54323
05-11 16:40:46.340 172.16.2.39:54322 34242 main INFO: Listening for HTTP and REST traffic on http://172.16.2.39:54322/
05-11 16:40:46.342 172.16.2.39:54322 34242 main INFO: H2O cloud name: 'H2OUser' on /172.16.2.39:54322, static configuration based on -flatfile flatfile.txt
05-11 16:40:46.342 172.16.2.39:54322 34242 main INFO: If you have trouble connecting, try SSH tunneling from your local machine (e.g., via port 55555):
05-11 16:40:46.342 172.16.2.39:54322 34242 main INFO: 1. Open a terminal and run 'ssh -L 55555:localhost:54322 H2OUser@172.16.2.39'
05-11 16:40:46.342 172.16.2.39:54322 34242 main INFO: 2. Point your browser to http://localhost:55555
05-11 16:40:46.542 172.16.2.39:54322 34242 main INFO: Log dir: '/tmp/h2o-H2OUser/h2ologs'
05-11 16:40:46.543 172.16.2.39:54322 34242 main INFO: Cur dir: '/Users/H2OUser/h2o-3'
05-11 16:40:46.564 172.16.2.39:54322 34242 main INFO: HDFS subsystem successfully initialized
05-11 16:40:46.565 172.16.2.39:54322 34242 main INFO: S3 subsystem successfully initialized
05-11 16:40:46.565 172.16.2.39:54322 34242 main INFO: Flow dir: '/Users/H2OUser/h2oflows'
05-11 16:40:46.578 172.16.2.39:54322 34242 main INFO: Cloud of size 3 formed [/172.16.2.39:54322, 172.16.2.40:54322, 172.16.2.41:54322]

As you add more nodes to your cluster, the H2O output will inform you:

INFO: Cloud of size 3 formed [/...]

Access the H2O Web UI with your browser. Point your browser to the IP address listed under “Listening for HTTP and REST traffic on…” in the H2O output.

If you are programmatically creating the cloud, give the cloud some time to establish itself (typically one minute is sufficient) and then check to see if the cloud is up.

To check the cloud’s status, point to the URL http://<ip>:<port>/Cloud.json (see a piece of the JSON response below). Wait for cloud_size to be the expected value and the consensus field to be true.

{
  ...
  "cloud_size": 2,
  "consensus": true,
  ...
}
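If you create the cloud from a script, you can automate the status check. This is a minimal sketch that assumes the Cloud.json path and the cloud_size/consensus fields described in this section. If your release uses a different path, check the REST API Reference. The one-minute default timeout mirrors the guidance above. The helper names are hypothetical.

```python
import json
import time
import urllib.request


def cloud_ready(status: dict, expected_size: int) -> bool:
    """True once Cloud.json reports the expected size and consensus has been reached."""
    return status.get("cloud_size") == expected_size and status.get("consensus") is True


def wait_for_cloud(ip: str, port: int, expected_size: int,
                   timeout_s: float = 60.0, poll_s: float = 2.0) -> dict:
    """Poll http://<ip>:<port>/Cloud.json until the cloud is ready or the timeout expires."""
    url = f"http://{ip}:{port}/Cloud.json"
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            with urllib.request.urlopen(url, timeout=5) as resp:
                status = json.load(resp)
            if cloud_ready(status, expected_size):
                return status
        except (OSError, ValueError):
            # Node not listening yet, or a non-JSON response: keep polling.
            pass
        if time.monotonic() >= deadline:
            raise TimeoutError(f"{url} did not report cloud_size={expected_size} with consensus")
        time.sleep(poll_s)
```

For example, wait_for_cloud("192.168.1.163", 54321, 2) returns the parsed JSON once both nodes from the flatfile example have joined.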

… On EC2 and S3

Note: If you would like to try out H2O on an EC2 cluster, play.h2o.ai is the easiest way to get started. H2O Play provides access to a temporary cluster managed by H2O.

If you would still like to set up your own EC2 cluster, follow the instructions below.

On EC2

Tested on Red Hat AMI, Amazon Linux AMI, and Ubuntu AMI

To use the Amazon Web Services (AWS) S3 storage solution, you will need to pass your S3 access credentials to H2O. This will allow you to access your data on S3 when importing data frames with path prefixes s3n://....

For security reasons, we recommend writing a script to read the access credentials that are stored in a separate file. This will not only keep your credentials from propagating to other locations, but it will also make it easier to change the credential information later.

Standalone Instance

When running H2O in standalone mode using the simple Java launch command, you can pass in the S3 credentials in two ways.

You can pass in credentials in standalone mode the same way as when accessing data from HDFS on Hadoop. Create a core-site.xml file and pass it in with the flag -hdfs_config. For an example core-site.xml file, refer to Core-site.xml Example.

Edit the properties in the core-site.xml file to include your Access Key ID and Secret Access Key as shown in the following example:

<property>
  <name>fs.s3n.awsAccessKeyId</name>
  <value>[AWS ACCESS KEY ID]</value>
</property>
<property>
  <name>fs.s3n.awsSecretAccessKey</name>
  <value>[AWS SECRET ACCESS KEY]</value>
</property>

Launch with the configuration file core-site.xml by entering the following in the command line:

java -jar h2o.jar -hdfs_config core-site.xml

Import the data using importFile with the S3 url path:

s3n://bucket/path/to/file.csv

You can pass the AWS Access Key and Secret Access Key in an S3N URL in Flow, R, or Python (where AWS_ACCESS_KEY represents your user name and AWS_SECRET_KEY represents your password).

To import the data from the Flow API:

`importFiles [ "s3n://<AWS_ACCESS_KEY>:<AWS_SECRET_KEY>@bucket/path/to/file.csv" ]`

To import the data from the R API:

`h2o.importFile(path = "s3n://<AWS_ACCESS_KEY>:<AWS_SECRET_KEY>@bucket/path/to/file.csv")`

To import the data from the Python API:

`h2o.import_frame(path = "s3n://<AWS_ACCESS_KEY>:<AWS_SECRET_KEY>@bucket/path/to/file.csv")`
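AWS secret keys can contain characters such as "/" and "+", which have special meaning in URLs. The sketch below percent-encodes the credentials with Python's urllib.parse.quote before building the S3N URL. It assumes your H2O/Hadoop version decodes percent-encoded credentials correctly, which is not guaranteed. If imports fail, the core-site.xml approach avoids the issue entirely. URLs that contain credentials may also end up in logs or command history. s3n_url is a hypothetical helper.

```python
from urllib.parse import quote


def s3n_url(access_key: str, secret_key: str, bucket: str, key_path: str) -> str:
    """Build s3n://<key>:<secret>@bucket/path with the credentials percent-encoded."""
    # safe="" makes quote() encode "/" as well, so it cannot be read as a path separator.
    user = quote(access_key, safe="")
    password = quote(secret_key, safe="")
    return f"s3n://{user}:{password}@{bucket}/{key_path.lstrip('/')}"
```

You could then pass the result to the Python call above, for example h2o.import_frame(path=s3n_url(key_id, secret, "bucket", "path/to/file.csv")).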

Core-site.xml Example

The following is an example core-site.xml file:

<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<!-- Put site-specific property overrides in this file. -->
<configuration>
  <!--
  <property>
    <name>fs.default.name</name>
    <value>s3n://<your s3 bucket></value>
  </property>
  -->
  <property>
    <name>fs.s3n.awsAccessKeyId</name>
    <value>insert access key here</value>
  </property>
  <property>
    <name>fs.s3n.awsSecretAccessKey</name>
    <value>insert secret key here</value>
  </property>
</configuration>
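This sketch follows the earlier recommendation to keep credentials in a separate file. It reads an INI-style credentials file and renders a minimal core-site.xml containing the two fs.s3n properties shown above. The assumed file layout is a [default] profile with aws_access_key_id and aws_secret_access_key, as in the AWS CLI credentials file. That layout and the helper names are assumptions, so adapt them to wherever you actually store credentials.

```python
import configparser
import os
from xml.sax.saxutils import escape


def core_site_xml(access_key_id: str, secret_key: str) -> str:
    """Render a minimal core-site.xml containing the two fs.s3n credential properties."""
    props = {
        "fs.s3n.awsAccessKeyId": access_key_id,
        "fs.s3n.awsSecretAccessKey": secret_key,
    }
    body = "".join(
        f"  <property>\n    <name>{name}</name>\n    <value>{escape(value)}</value>\n  </property>\n"
        for name, value in props.items()
    )
    return '<?xml version="1.0"?>\n<configuration>\n' + body + "</configuration>\n"


def core_site_from_credentials(cred_path: str, profile: str = "default") -> str:
    """Read keys from an INI-style credentials file (assumed layout) and render core-site.xml."""
    parser = configparser.ConfigParser(interpolation=None)  # treat "%" literally
    if not parser.read(cred_path):
        raise FileNotFoundError(cred_path)
    section = parser[profile]
    return core_site_xml(section["aws_access_key_id"], section["aws_secret_access_key"])


def write_private(path: str, text: str) -> None:
    """Create the file with owner-only permissions (0600 on POSIX systems) and write text."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "w") as fh:
        fh.write(text)
```

For example, write_private("core-site.xml", core_site_from_credentials(os.path.expanduser("~/.aws/credentials"))) followed by java -jar h2o.jar -hdfs_config core-site.xml. Changing keys later only requires updating the credentials file and rerunning the script.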

Launching H2O

- Selecting the Operating System and Virtualization Type

- Configuring the Instance

- Downloading Java and H2O

Note: Before launching H2O on an EC2 cluster, verify that ports 54321 and 54322 are both accessible by TCP and UDP.

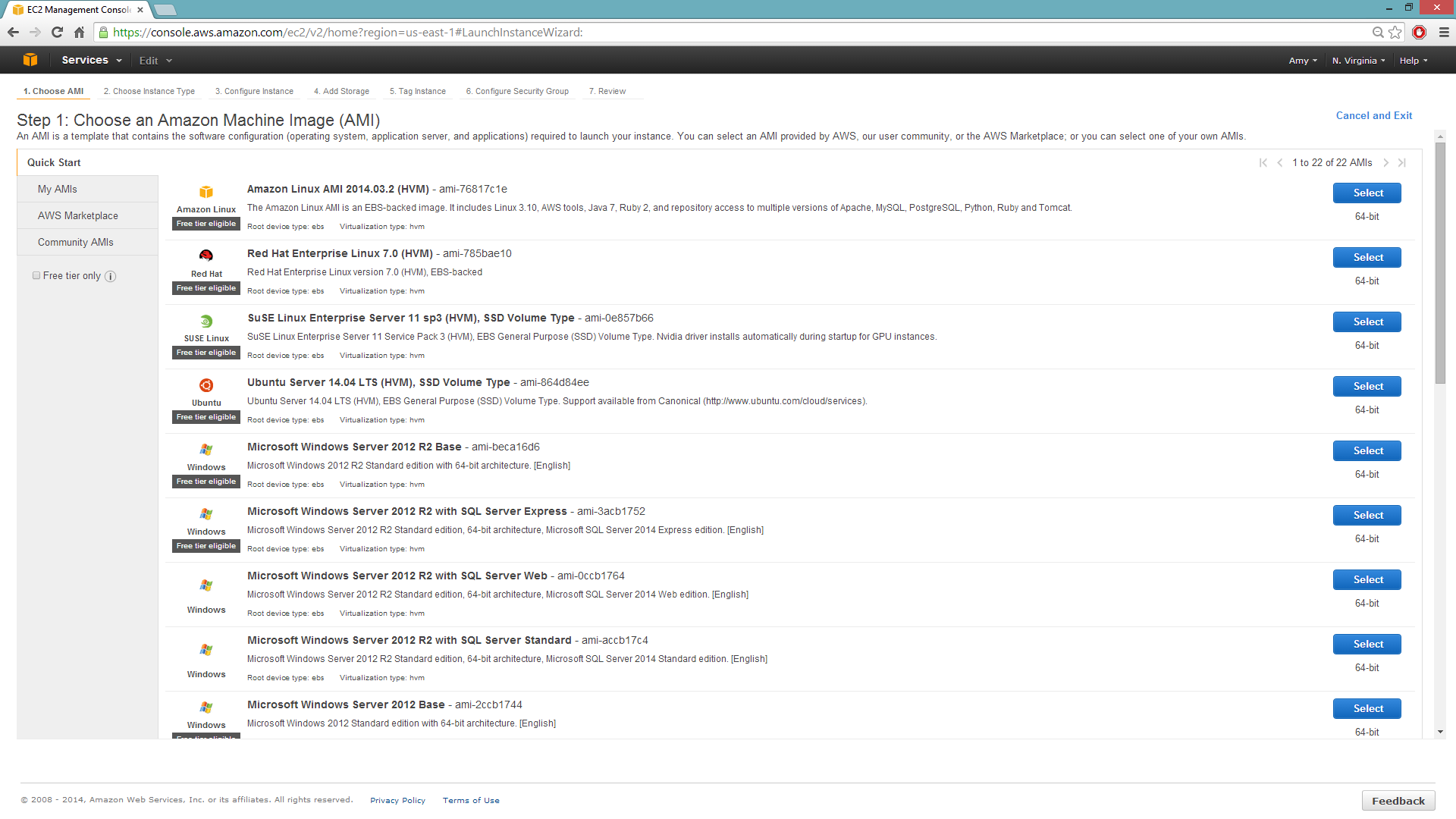

Selecting the Operating System and Virtualization Type

Select your operating system and the virtualization type of the prebuilt AMI on Amazon. If you are using Windows, you will need to use a hardware-assisted virtual machine (HVM). If you are using Linux, you can choose between para-virtualization (PV) and HVM. These selections determine the type of instances you can launch.

For more information about virtualization types, refer to Amazon.

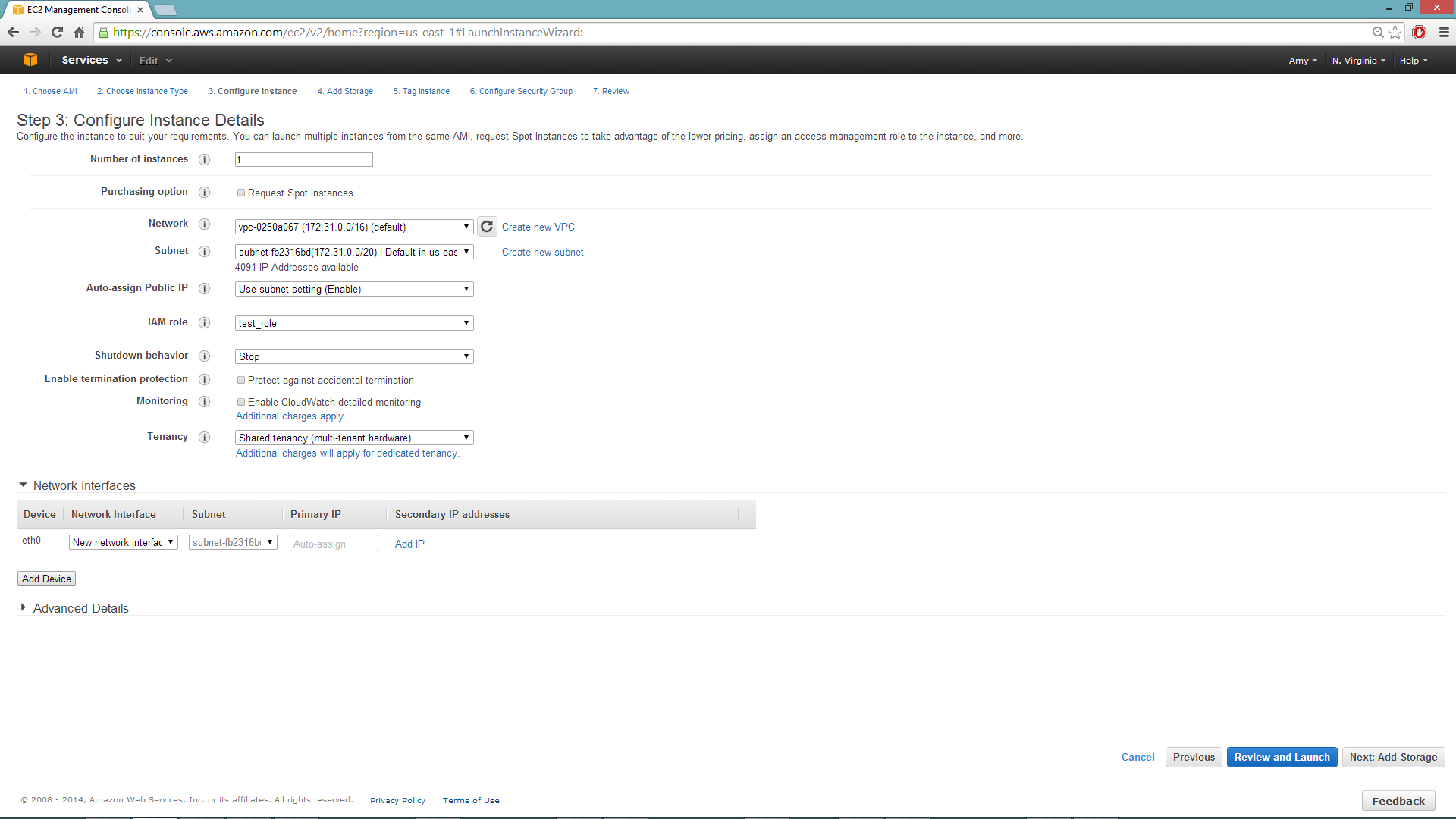

Configuring the Instance

Select the IAM role and policy to use to launch the instance. H2O detects the temporary access keys associated with the instance, so you don’t need to copy your AWS credentials to the instances.

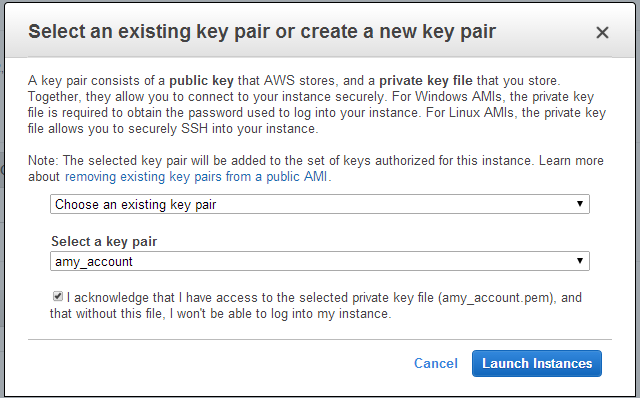

When launching the instance, select an accessible key pair.

(Windows Users) Tunneling into the Instance

Windows users who cannot run ssh from a terminal can download either Cygwin or Git Bash, both of which can run ssh:

ssh -i amy_account.pem ec2-user@54.165.25.98

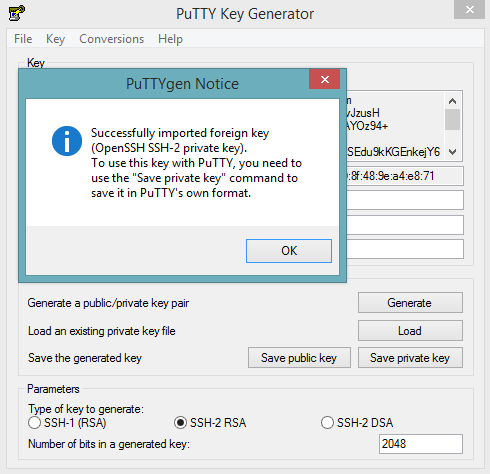

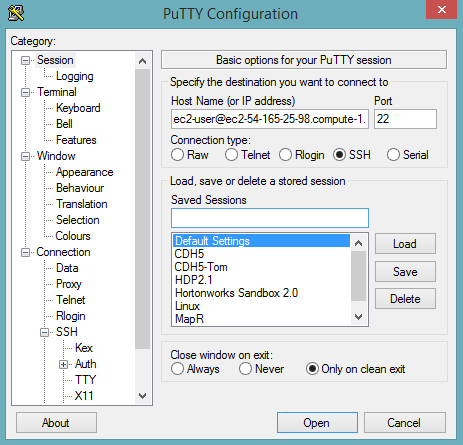

Otherwise, download PuTTY and follow these instructions:

- Launch the PuTTY Key Generator.

- Load your downloaded AWS pem key file. Note: To see the file, change the browser file type to “All”.

- Save the private key as a .ppk file.

- Launch the PuTTY client.

- In the Session section, enter the host name or IP address. For Ubuntu users, the default host name is ubuntu@<ip-address>. For Amazon Linux and Red Hat users, the default host name is ec2-user@<ip-address>.

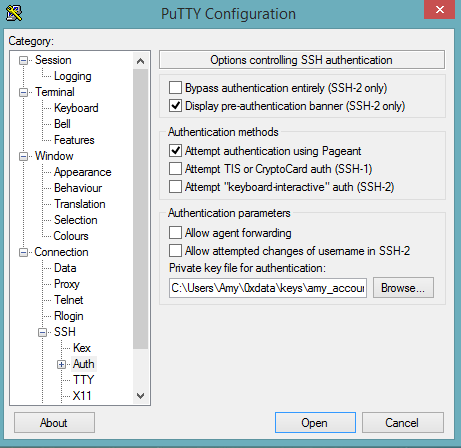

- Select SSH, then Auth in the sidebar, and click the Browse button to select the private key file for authentication.

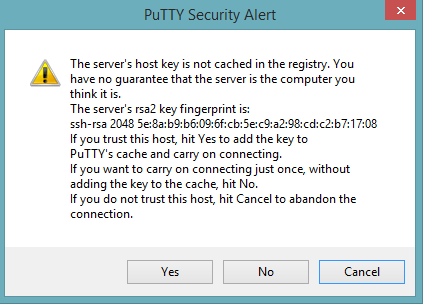

- Start a new session and click the Yes button to confirm caching of the server’s rsa2 key fingerprint and continue connecting.

Downloading Java and H2O

- Download Java (JDK 1.7 or later) if it is not already available on the instance.

- To download H2O, run the wget command with the link to the zip file available on our website (copy the link associated with the Download button for the selected H2O build):

wget http://h2o-release.s3.amazonaws.com/h2o/rel-shannon/1/index.html
unzip h2o-0.2.1.1.zip
cd h2o-0.2.1.1
java -Xmx4g -jar h2o.jar

- From your browser, navigate to <Private_IP_Address>:54321 or <Public_DNS>:54321 to use H2O’s web interface.

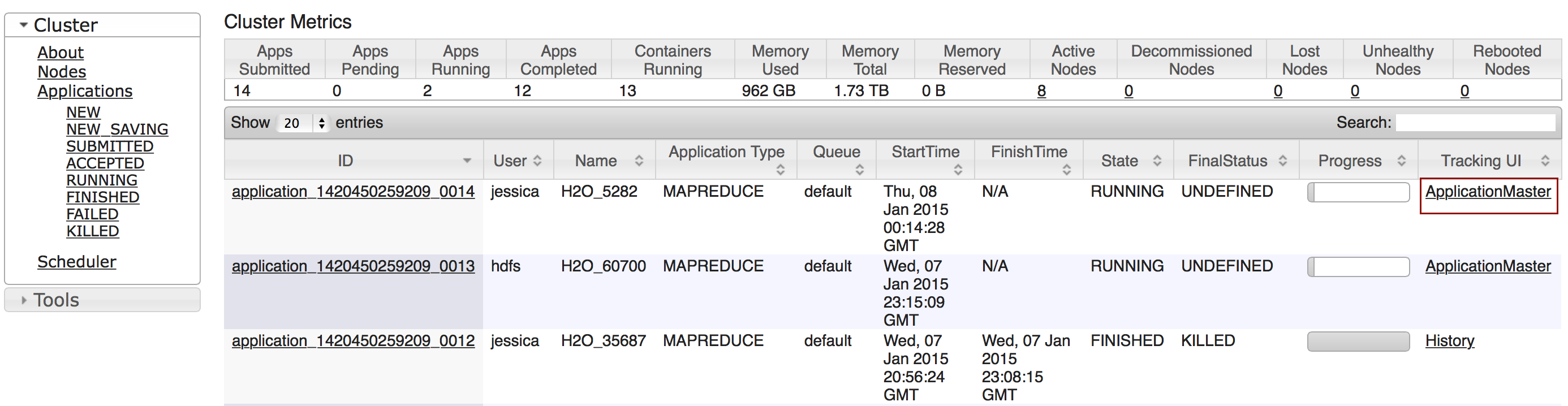

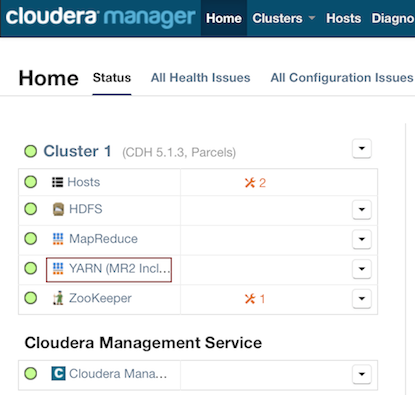

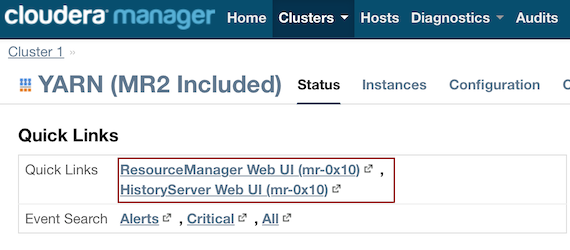

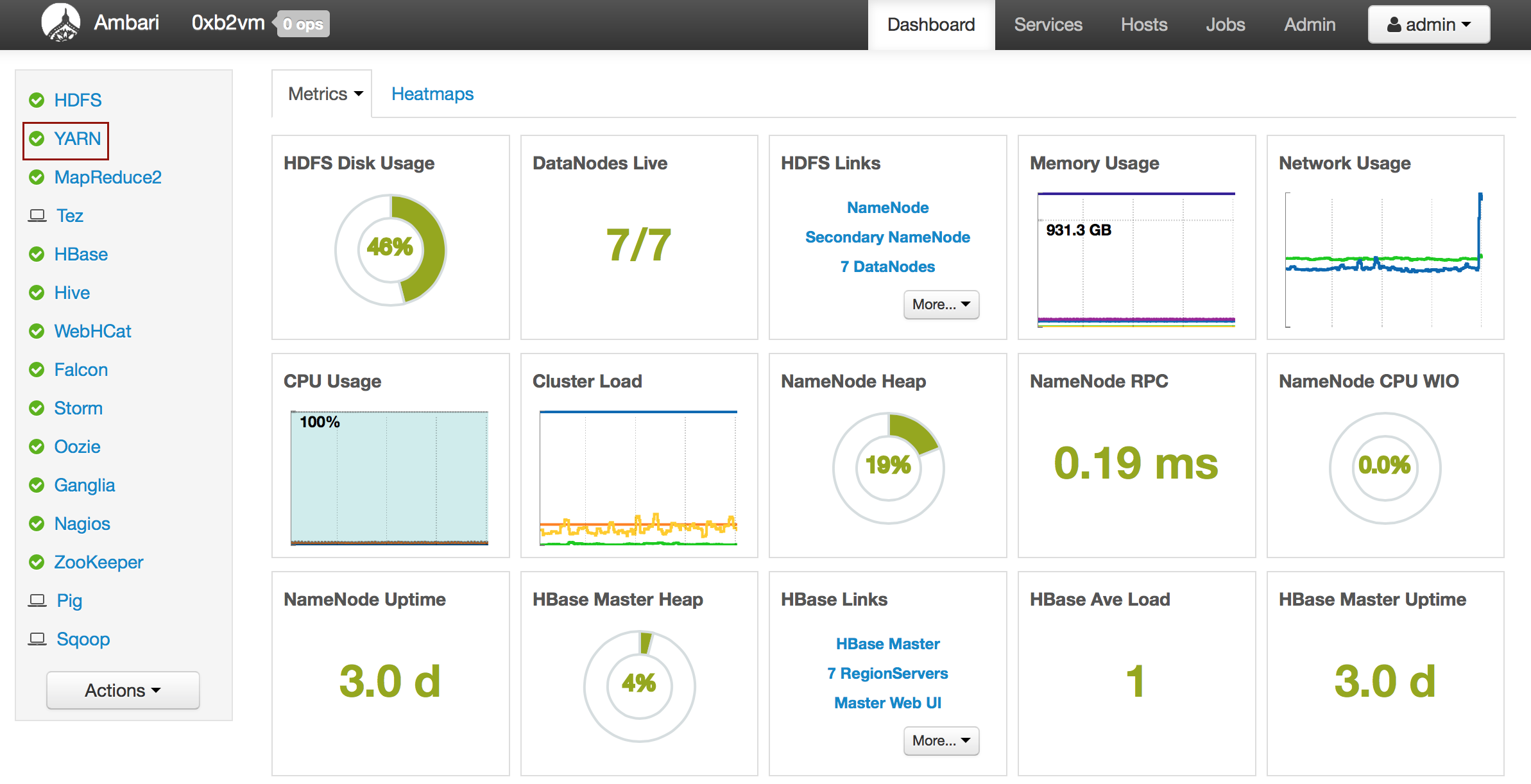

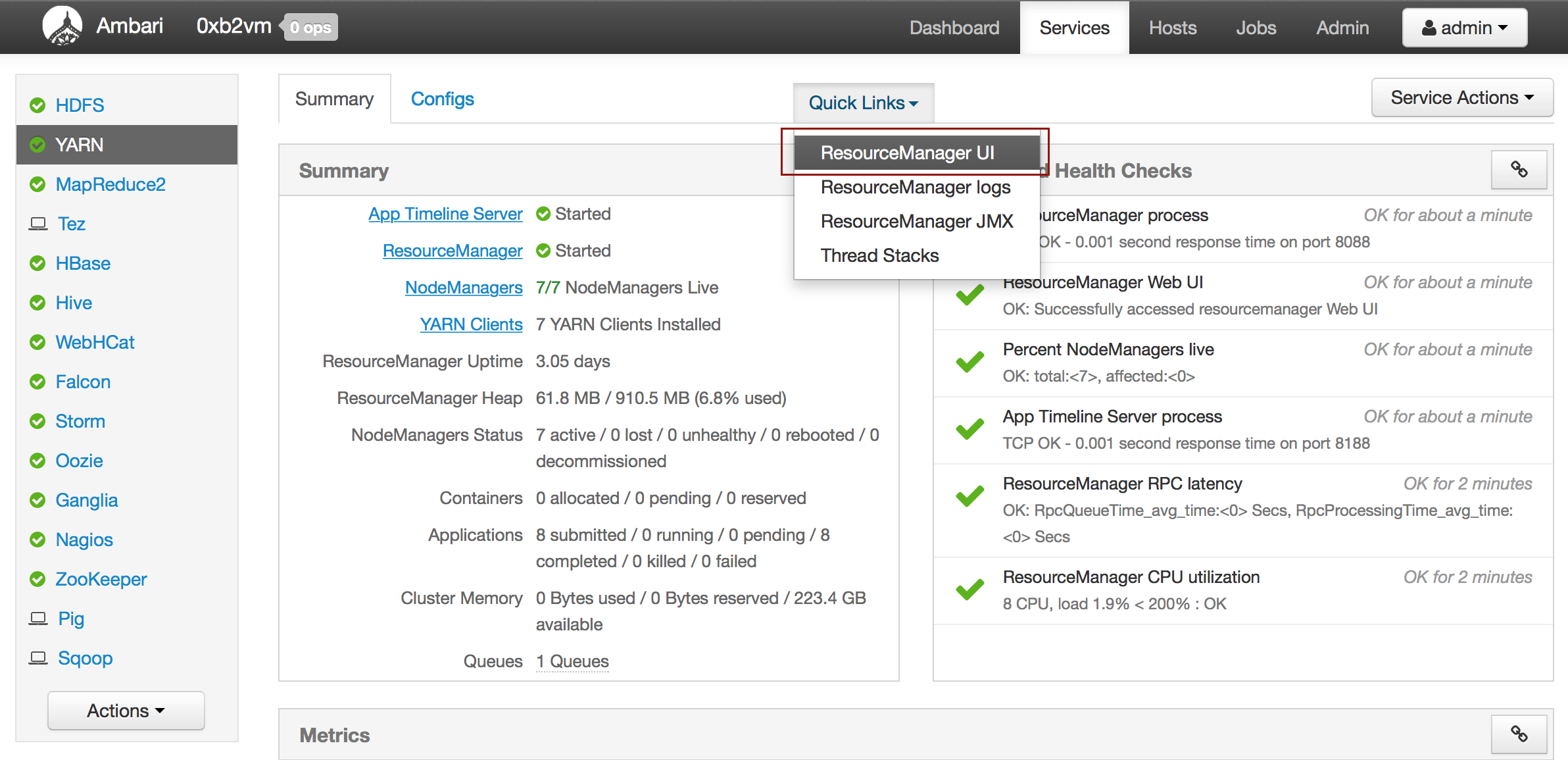

… On Hadoop

Currently supported versions:

- CDH 5.2

- CDH 5.3

- HDP 2.1

- HDP 2.2

- MapR 3.1.1

- MapR 4.0.1

Important Points to Remember:

- Each H2O node runs as a mapper

- Run only one mapper per host

- There are no combiners or reducers

- Each H2O cluster must have a unique job name

- The -mapperXmx, -nodes, and -output options are required

- Root permissions are not required; just unzip the H2O .zip file on any single node

Prerequisite: Open Communication Paths

H2O communicates using two communication paths. Verify these are open and available for use by H2O.

Path 1: mapper to driver

Optionally specify this port using the -driverport option in the hadoop jar command (see “Hadoop Launch Parameters” below). This port is opened on the driver host (the host where you entered the hadoop jar command). By default, this port is chosen randomly by the operating system.

Path 2: mapper to mapper

Optionally specify this port using the -baseport option in the hadoop jar command (see “Hadoop Launch Parameters” below). This port and the next subsequent port are opened on the mapper hosts (the Hadoop worker nodes) where the H2O mapper nodes are placed by the Resource Manager. By default, ports 54321 (TCP) and 54322 (TCP & UDP) are used.

The mapper port is adaptive: if 54321 and 54322 are not available, H2O will try 54323 and 54324, and so on. The port is adaptive because, when the YARN cluster is low on resources, YARN sometimes places two H2O mappers from the same H2O cluster request on the same physical host. For this reason, we recommend opening a range of more than two ports (20 ports should be sufficient).
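The adaptive port search described above can be modeled as a pure function over the set of ports already in use on a host. It follows the documented behavior: try a base port and the next one, then step up by two. This is an illustration, not H2O's implementation, and next_port_pair is a hypothetical helper. It also shows why about 20 open ports is enough: that range holds 10 candidate pairs.

```python
from typing import Optional, Set


def next_port_pair(in_use: Set[int], base: int = 54321, max_pairs: int = 10) -> Optional[int]:
    """Return the first port p (base, base+2, ...) where p and p+1 are both free, else None."""
    for i in range(max_pairs):
        p = base + 2 * i
        if p not in in_use and p + 1 not in in_use:
            return p
    return None
```

For example, next_port_pair({54321, 54322}) returns 54323, which is the pair a second mapper placed on the same host would use.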

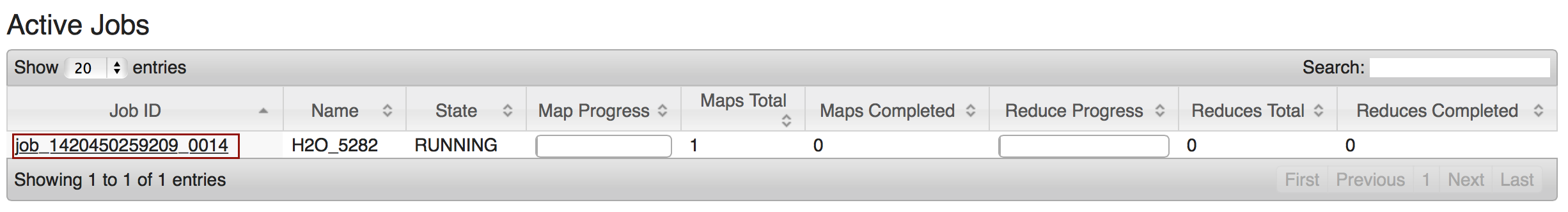

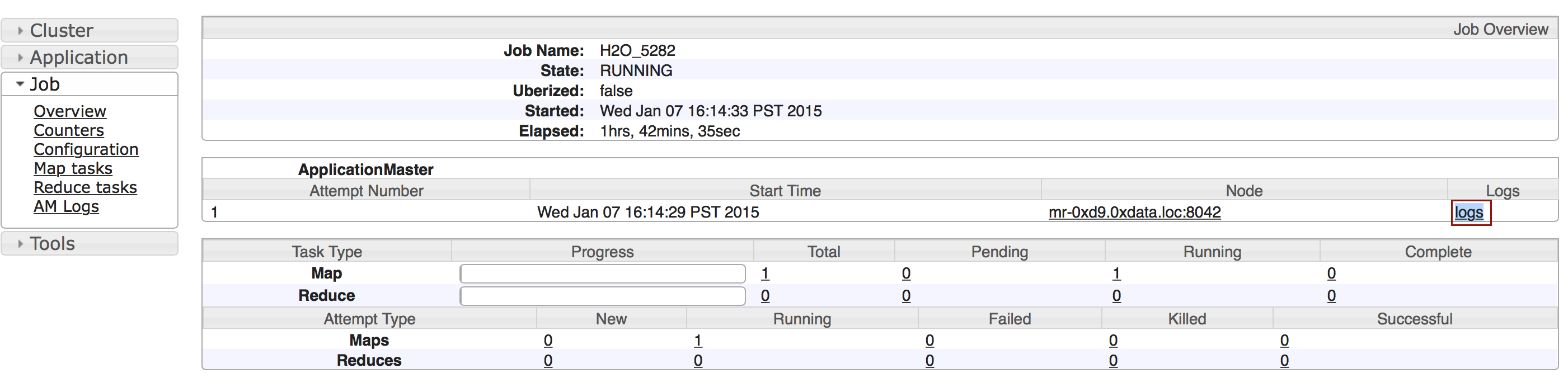

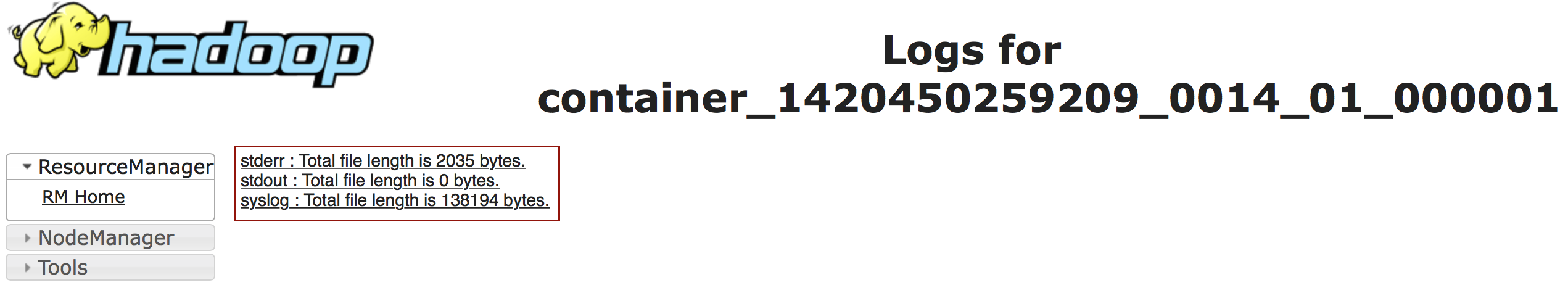

Tutorial

The following tutorial will walk the user through the download or build of H2O and the parameters involved in launching H2O from the command line.

Download the latest H2O release for your version of Hadoop:

wget http://h2o-release.s3.amazonaws.com/h2o/master/1110/h2o-0.3.0.1110-cdh5.2.zip
wget http://h2o-release.s3.amazonaws.com/h2o/master/1110/h2o-0.3.0.1110-cdh5.3.zip
wget http://h2o-release.s3.amazonaws.com/h2o/master/1110/h2o-0.3.0.1110-hdp2.1.zip
wget http://h2o-release.s3.amazonaws.com/h2o/master/1110/h2o-0.3.0.1110-hdp2.2.zip
wget http://h2o-release.s3.amazonaws.com/h2o/master/1110/h2o-0.3.0.1110-mapr3.1.1.zip
wget http://h2o-release.s3.amazonaws.com/h2o/master/1110/h2o-0.3.0.1110-mapr4.0.1.zip

Note: Enter only one of the above commands.

Prepare the job input on the Hadoop Node by unzipping the build file and changing to the directory with the Hadoop and H2O’s driver jar files.

unzip h2o-0.3.0.1110-*.zip
cd h2o-0.3.0.1110-*

To launch H2O nodes and form a cluster on the Hadoop cluster, run:

hadoop jar h2odriver.jar -nodes 1 -mapperXmx 1g -output hdfsOutputDirName

The above command launches a 1g node of H2O. We recommend you launch the cluster with at least four times the memory of your data file size.

- -mapperXmx is the mapper size, or the amount of memory allocated to each node.

- -nodes is the number of nodes requested to form the cluster.

- -output is the name of the HDFS directory created each time an H2O cloud is launched, so the name must be unique for each launch.
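As a rough planning aid, the sketch below applies the four-times-the-data rule of thumb from above to pick a per-node -mapperXmx value and builds a launch command with a unique -output directory. The helper names, the rounding up to whole gigabytes, and the random directory suffix are assumptions made for illustration.

```python
import math
import uuid


def suggested_mapper_xmx_gb(data_size_gb, nodes, multiplier=4):
    """Per-node heap (whole GB) so the cluster totals at least
    `multiplier` times the size of the data file."""
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    total_gb = data_size_gb * multiplier
    return max(1, math.ceil(total_gb / nodes))


def build_launch_command(data_size_gb, nodes, output_prefix="h2o_out"):
    """Assemble a hadoop jar command line with a unique -output directory."""
    xmx = suggested_mapper_xmx_gb(data_size_gb, nodes)
    # A random suffix keeps the HDFS output directory unique for each launch.
    output_dir = f"{output_prefix}_{uuid.uuid4().hex[:8]}"
    return [
        "hadoop", "jar", "h2odriver.jar",
        "-nodes", str(nodes),
        "-mapperXmx", f"{xmx}g",
        "-output", output_dir,
    ]


# Example: a 2.5 GB file on 4 nodes needs 10 GB in total, so 3g per node.
print(" ".join(build_launch_command(2.5, 4)))
```

Passing the returned list to subprocess.run would start the job; the sketch stops at building the arguments so you can review them first.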

To monitor your job, direct your web browser to your standard job tracker web UI. To access H2O’s web UI, direct your web browser to one of the launched instances. If you are unsure where your JVM was launched, review the output of your command after the nodes have clouded up and formed a cluster. Any of the nodes’ IP addresses will work, because there is no master node.

Determining driver host interface for mapper->driver callback...
    [Possible callback IP address: 172.16.2.181]
    [Possible callback IP address: 127.0.0.1]
...
Waiting for H2O cluster to come up...
H2O node 172.16.2.184:54321 requested flatfile
Sending flatfiles to nodes...
    [Sending flatfile to node 172.16.2.184:54321]
H2O node 172.16.2.184:54321 reports H2O cluster size 1
H2O cluster (1 nodes) is up
Blocking until the H2O cluster shuts down...
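If you launch H2O from a script, you can extract the node addresses from the driver output instead of reading them by eye. This sketch assumes the output contains lines in the format shown above ("H2O node <ip>:<port> reports H2O cluster size <n>"); the function names are hypothetical.

```python
import re

# Matches lines such as "H2O node 172.16.2.184:54321 reports H2O cluster size 1".
NODE_RE = re.compile(
    r"H2O node (\d{1,3}(?:\.\d{1,3}){3}):(\d+) reports H2O cluster size (\d+)"
)


def cluster_nodes(driver_output):
    """Return the (ip, port) pairs of nodes that reported in, in order."""
    nodes = []
    for match in NODE_RE.finditer(driver_output):
        addr = (match.group(1), int(match.group(2)))
        if addr not in nodes:
            nodes.append(addr)
    return nodes


def web_ui_url(driver_output):
    """Any node works because there is no master node; use the first one."""
    nodes = cluster_nodes(driver_output)
    if not nodes:
        raise ValueError("no H2O node addresses found in the output")
    ip, port = nodes[0]
    return f"http://{ip}:{port}"
```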

Hadoop Launch Parameters

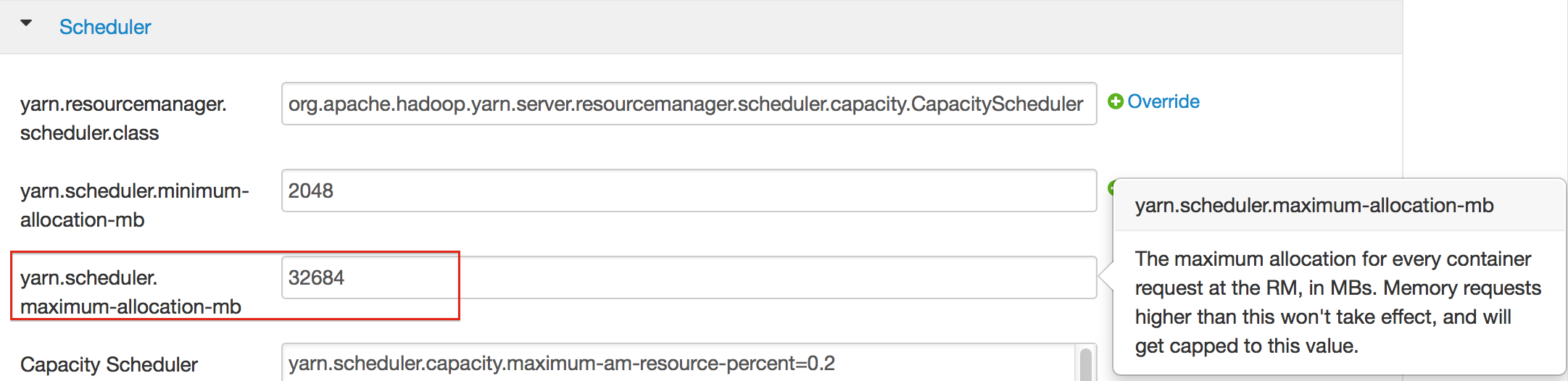

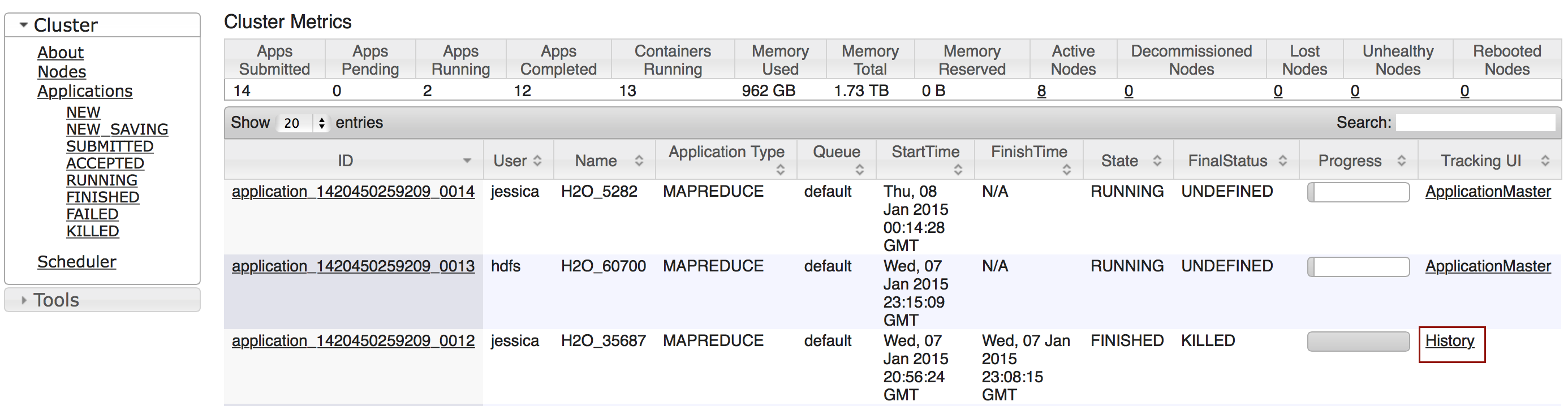

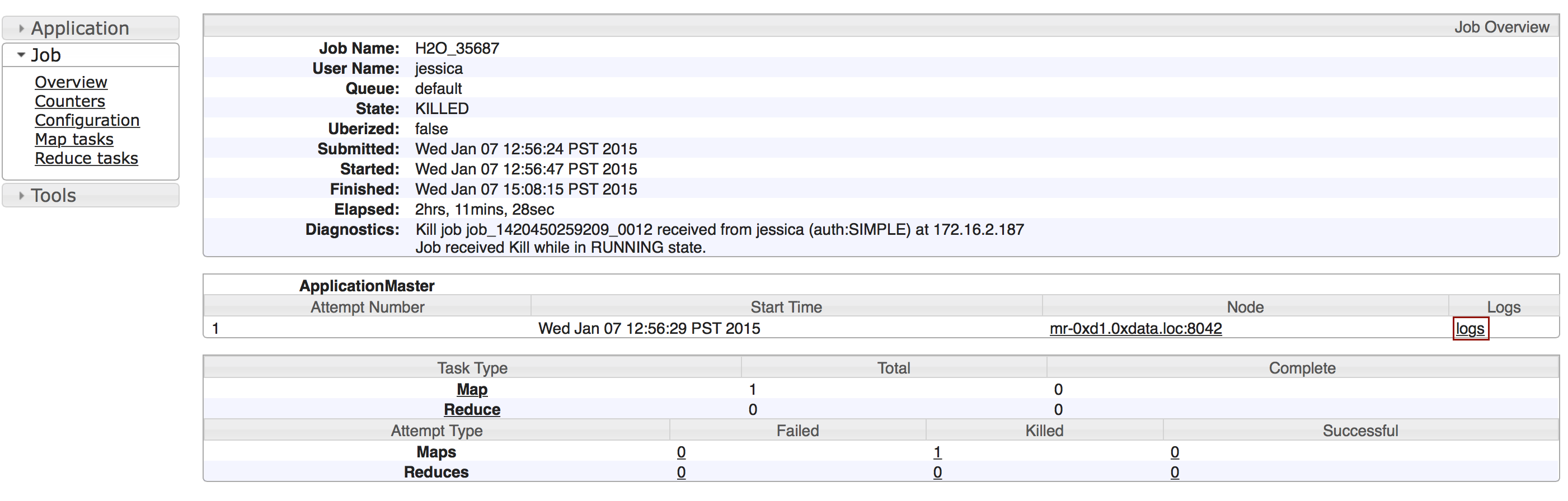

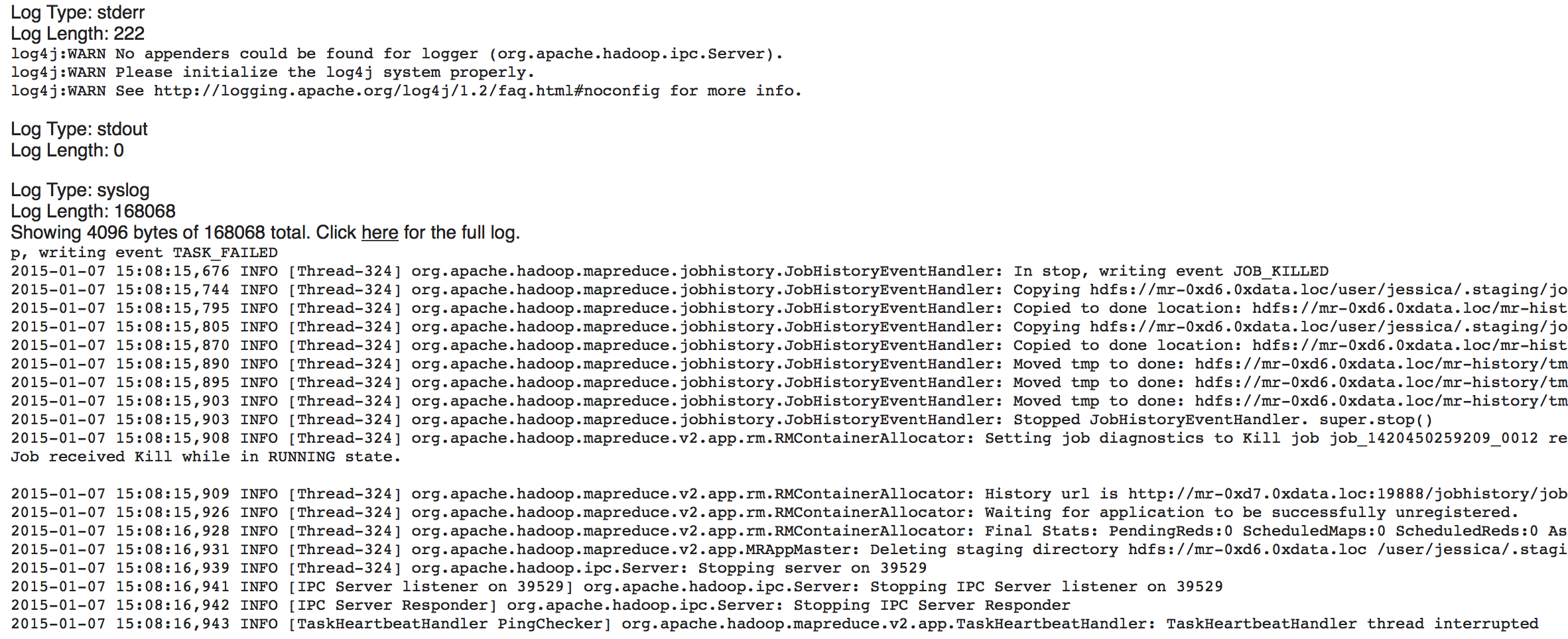

- -h | -help: Display help.

- -jobname <JobName>: Specify a job name for the JobTracker to use; the default is H2O_nnnnn (where n is chosen randomly).

- -driverif <IP address of mapper -> driver callback interface>: Specify the IP address for callback messages from the mapper to the driver.

- -driverport <port of mapper -> driver callback interface>: Specify the port number for callback messages from the mapper to the driver.

- -network <IPv4Network1>[,<IPv4Network2>]: Specify the IPv4 network(s) to bind to the H2O nodes; multiple networks can be specified to force H2O to use the specified host in the Hadoop cluster. For example, 10.1.2.0/24 allows 256 possibilities.

- -timeout <seconds>: Specify the timeout duration (in seconds) to wait for the cluster to form before failing.

- -disown: Exit the driver after the cluster forms.

- -notify <notification file name>: Specify a file to write when the cluster is up. The file contains the IP and port of the embedded web server for one of the nodes in the cluster. All mappers must start before the H2O cloud is considered “up”.

- -mapperXmx <per mapper Java Xmx heap size>: Specify the amount of memory to allocate to H2O.

- -extramempercent <0-20>: Specify the extra memory for internal JVM use outside of the Java heap, as a percentage of mapperXmx.

- -n | -nodes <number of H2O nodes>: Specify the number of nodes.

- -nthreads <maximum number of CPUs>: Specify the number of CPUs to use. Enter -1 to use all CPUs on the host, or enter a positive integer.

- -baseport <initialization port for H2O nodes>: Specify the initialization port for the H2O nodes. The default is 54321.

- -ea: Enable assertions to verify boolean expressions for error detection.

- -verbose:gc: Include heap and garbage collection information in the logs.

- -XX:+PrintGCDetails: Include a short message after each garbage collection.

- -license <license file name>: Specify the local filesystem directory and the name of the license file.

- -o | -output <HDFS output directory>: Specify the HDFS directory for the output.

- -flow_dir <Saved Flows directory>: Specify the directory for saved flows. By default, H2O tries to find the HDFS home directory to use as the directory for flows. If the HDFS home directory is not found, flows cannot be saved unless a directory is specified using -flow_dir.
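The "256 possibilities" in the -network description is simply the size of a /24 IPv4 network. The sketch below uses Python's standard ipaddress module to compute a network's size and to check whether a host address falls inside one of the comma-separated networks you would pass to -network. It also shows the memory arithmetic implied by -extramempercent, under the assumption that the overhead is added on top of the -mapperXmx heap. All helper names are hypothetical.

```python
import ipaddress


def network_size(cidr):
    """Number of addresses covered by an IPv4 network such as 10.1.2.0/24."""
    return ipaddress.ip_network(cidr).num_addresses


def host_matches(host_ip, networks):
    """True if host_ip falls inside any of the comma-separated networks."""
    ip = ipaddress.ip_address(host_ip)
    return any(ip in ipaddress.ip_network(n.strip()) for n in networks.split(","))


def container_memory_mb(mapper_xmx_mb, extramempercent):
    """Heap plus the assumed -extramempercent overhead, in MB."""
    if not 0 <= extramempercent <= 20:
        raise ValueError("extramempercent must be between 0 and 20")
    return mapper_xmx_mb + mapper_xmx_mb * extramempercent // 100


print(network_size("10.1.2.0/24"))       # prints 256
print(container_memory_mb(10240, 10))    # 10g heap + 10% -> prints 11264
```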

Accessing S3 Data from Hadoop

H2O launched on Hadoop can access S3 data in addition to HDFS. To enable access, follow the instructions below.

Edit Hadoop’s core-site.xml, then set the HADOOP_CONF_DIR environment variable to the directory containing the core-site.xml file. For an example core-site.xml file, refer to Core-site.xml. For most Hadoop distributions, the configuration directory is typically /etc/hadoop/conf.
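In core-site.xml, the S3 credentials are ordinary Hadoop properties. The sketch below generates a minimal core-site.xml with Python's standard xml.etree.ElementTree. It uses the fs.s3n.* property names that match the s3n:// URLs in the import examples, and the credential values passed in are placeholders. If you already have a core-site.xml, merge these properties into it rather than overwriting the file, and keep it readable only by the users who need it.

```python
import xml.etree.ElementTree as ET


def core_site_xml(access_key, secret_key):
    """Build a minimal core-site.xml carrying S3 credentials for s3n:// URLs."""
    config = ET.Element("configuration")
    for name, value in [
        ("fs.s3n.awsAccessKeyId", access_key),
        ("fs.s3n.awsSecretAccessKey", secret_key),
    ]:
        prop = ET.SubElement(config, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(config, encoding="unicode")


# Placeholder values; substitute your own key ID and secret.
print(core_site_xml("MY_ACCESS_KEY_ID", "MY_SECRET_ACCESS_KEY"))
```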

You can also pass the S3 credentials when launching H2O with the Hadoop jar command. Use the -D flag to pass the credentials:

hadoop jar h2odriver.jar -Dfs.s3n.awsAccessKeyId="${AWS_ACCESS_KEY}" -Dfs.s3n.awsSecretAccessKey="${AWS_SECRET_KEY}" -n 3 -mapperXmx 10g -output outputDirectory

where AWS_ACCESS_KEY is your AWS access key ID and AWS_SECRET_KEY is your AWS secret access key.

Then import the data with the S3 URL path:

To import the data from the Flow API:

importFiles [ "s3n://bucket/path/to/file.csv" ]

To import the data from the R API:

h2o.importFile(path = "s3n://bucket/path/to/file.csv")

To import the data from the Python API:

h2o.import_frame(path = "s3n://bucket/path/to/file.csv")

Using Docker

This walkthrough describes:

- Installing Docker on Mac or Linux OS

- Creating and modifying the Dockerfile

- Building a Docker image from the Dockerfile

- Running the Docker build

- Launching H2O

- Accessing H2O from the web browser or R

Walkthrough

Prerequisites

Linux kernel version 3.8+

or

Mac OS X 10.6+

Note: Older Linux kernel versions are known to cause kernel panics and to break Docker; there are ways around it, but attempt at your own risk.

You can check your kernel version by running uname -r in your terminal. The following walkthrough has been tested on Mac OS X 10.10.1.
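Kernel versions must be compared numerically, not as strings: 3.13 is newer than 3.8, even though "3.13" sorts before "3.8" as text. A minimal sketch for checking a uname -r string against the 3.8 minimum (the helper names are hypothetical):

```python
import re


def kernel_version(release):
    """Extract (major, minor) from a uname -r string such as '3.13.0-24-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def meets_docker_minimum(release, minimum=(3, 8)):
    """True if the kernel release is at least the 3.8 minimum listed above."""
    return kernel_version(release) >= minimum
```

On a Linux host, platform.release() from Python's standard library returns the same string as uname -r, so you can pass it directly.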

Step 1 - Install and Launch Docker

Step 2 - Create or Download Dockerfile

Create a folder on the Host OS to host your Dockerfile by running:

mkdir -p /data/h2o-shannon

Then either download or create a Dockerfile. The Dockerfile is essentially a build recipe that will be used to build the container.

Download and use our Dockerfile template by running:

cd /data/h2o-shannon

wget http://h2o.ai/blog/2015_01_h2o-docker/Dockerfile

The Dockerfile will:

- Pull and update the base image (Ubuntu 14.04)

- Install Java 7

- Fetch and download the H2O Shannon build from H2O’s S3 repository

- Expose ports 54321 and 54322 in preparation for launching H2O on those ports

Step 3 - Build Docker image from Dockerfile

From the /data/h2o-shannon directory, run:

docker build -t="h2o.ai/shannon" .

This process can take a few minutes as it assembles all the necessary parts to the image.

Step 4 - Run Docker Build

On a Mac, you must use the argument -p 54321:54321 to expressly map the port 54321. This is redundant on Linux.

docker run -it -p 54321:54321 h2o.ai/shannon

Step 5 - Launch H2O

Step into the /opt directory and launch H2O. Change the value of -Xmx to the amount of memory you want to allocate to the H2O instance. By default, H2O launches on port 54321.

cd /opt

java -Xmx1g -jar h2o.jar

Step 6 - Access H2O from the web browser or R

- On Linux, when H2O finishes launching, copy the IP address and port of the H2O instance from the log output. In the following example, that would be 172.17.0.5:54321.

03:58:25.963 main INFO WATER: Cloud of size 1 formed [/172.17.0.5:54321 (00:00:00.000)]

- If H2O is running on a Mac, you need to find the IP address of the Docker network that bridges to your host OS. To do this, open a new terminal (not a bash shell in your container) and run boot2docker ip:

$ boot2docker ip

192.168.59.103

Once you have the IP address, point your browser to that IP address and port. In R, you can access the instance by installing the latest version of the H2O R package and running:

library(h2o)

dockerH2O <- h2o.init(ip = "192.168.59.103", port = 54321)
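Before calling h2o.init, you may want to confirm that the instance's port is reachable from your host. The sketch below is a plain TCP check using Python's standard socket module; the helper name is hypothetical, and it does not talk to H2O's REST API. It only tests whether something is listening at the address.

```python
import socket


def is_reachable(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, unreachable hosts, and timeouts.
        return False


# For example, with the boot2docker address shown above:
# is_reachable("192.168.59.103", 54321)
```

If this returns False on a Mac, check that the container was started with -p 54321:54321.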

Flow Web UI

H2O Flow is an open-source user interface for H2O. It is a web-based interactive environment that allows you to combine code execution, text, mathematics, plots, and rich media in a single document, similar to IPython notebooks.

With H2O Flow, you can capture, rerun, annotate, present, and share your workflow. H2O Flow allows you to use H2O interactively to import files, build models, and iteratively improve them. Based on your models, you can make predictions and add rich text to create vignettes of your work - all within Flow’s browser-based environment.

Flow’s hybrid user interface blends command-line computing with a modern graphical user interface. Rather than displaying output as plain text, Flow provides a point-and-click user interface for every H2O operation. It allows you to access any H2O object in the form of well-organized tabular data.

H2O Flow sends commands to H2O as a sequence of executable cells. The cells can be modified, rearranged, or saved to a library. Each cell contains an input field that allows you to enter commands, define functions, call other functions, and access other cells or objects on the page. When you execute the cell, the output is a graphical object, which can be inspected to view additional details.

While H2O Flow supports REST API, R scripts, and CoffeeScript, no programming experience is required to run H2O Flow. You can click your way through any H2O operation without ever writing a single line of code. You can even disable the input cells to run H2O Flow using only the GUI. H2O Flow is designed to guide you every step of the way, by providing input prompts, interactive help, and example flows.

Introduction

This guide will walk you through how to use H2O’s web UI, H2O Flow. To view a demo video of H2O Flow, click here.

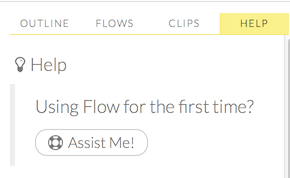

Getting Help

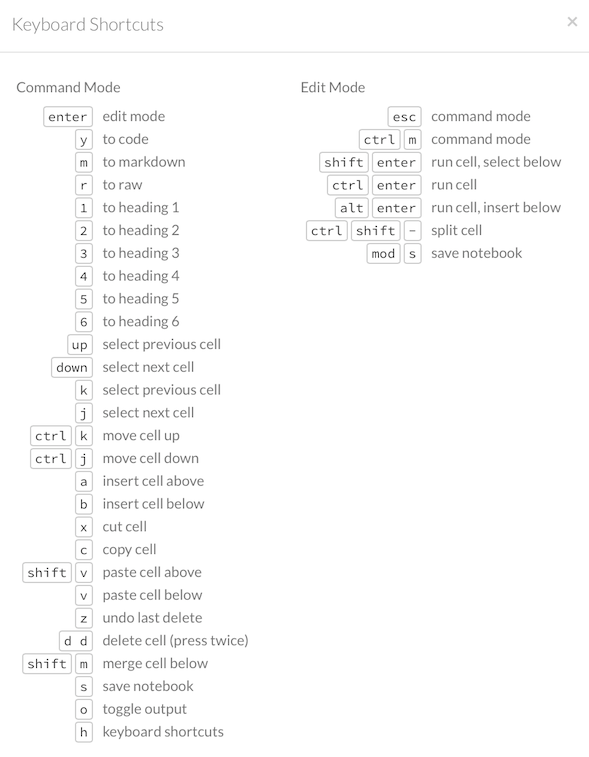

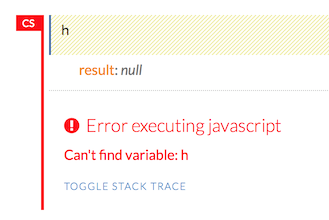

First, let’s go over the basics. Type h to view a list of helpful shortcuts.

The following help window displays:

To close this window, click the X in the upper-right corner, or click the Close button in the lower-right corner. You can also click behind the window to close it. You can also access this list of shortcuts by clicking the Help menu and selecting Keyboard Shortcuts.

For additional help, select the Help sidebar to the right and click the Assist Me! button.

You can also type assist in a blank cell and press Ctrl+Enter. A list of common tasks displays to help you find the correct command.

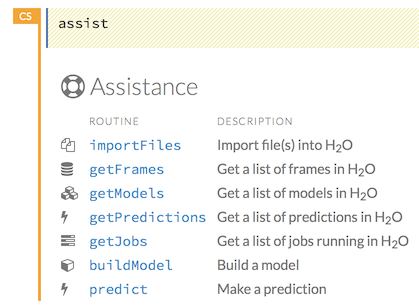

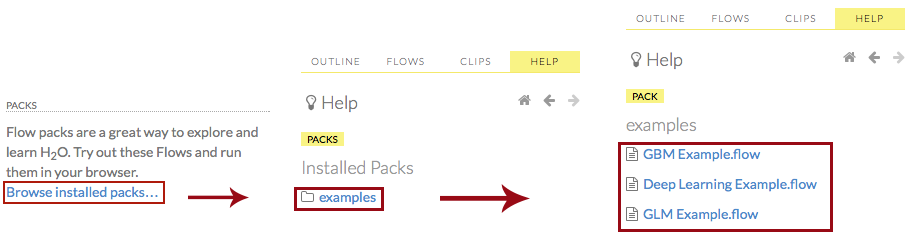

There are multiple resources to help you get started with Flow in the Help sidebar. To access this document, select the Getting Started with H2O Flow link below the Help Topics heading.

You can also explore the pre-configured flows available in H2O Flow for a demonstration of how to create a flow. To view the example flows, click the Browse installed packs… link in the Packs subsection of the Help sidebar. Click the examples folder and select the example flow from the list.

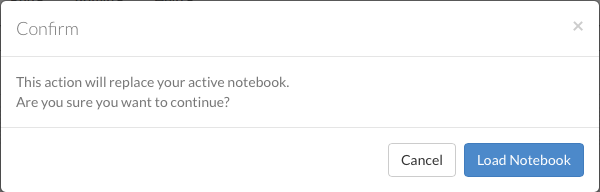

If you have a flow currently open, a confirmation window appears asking if the current notebook should be replaced. To load the example flow, click the Load Notebook button.

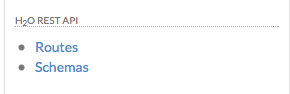

To view the REST API documentation, click the Help tab in the sidebar and then select the type of REST API documentation (Routes or Schemas).

Before getting started with H2O Flow, make sure you understand the different cell modes.

Understanding Cell Modes

There are two modes for cells: edit and command.

Using Edit Mode

In edit mode, the cell is yellow with a blinking bar to indicate where text can be entered and there is an orange flag to the left of the cell.

Using Command Mode

In command mode, the flag is yellow. The flag also indicates the cell’s format:

MD: Markdown

Note: Markdown formatting is not applied until you run the cell by clicking the Run button or clicking the Run menu and selecting Run.

CS: Code (default)

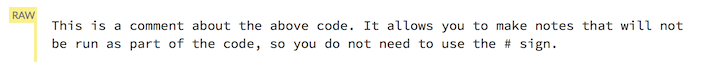

RAW: Raw format (for code comments)

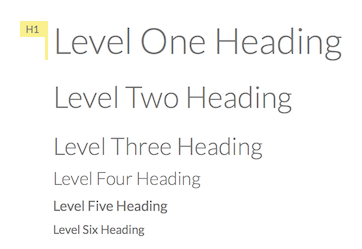

H[1-6]: Heading level (where 1 is a first-level heading)

NOTE: If there is an error in the cell, the flag is red.

If the cell is executing commands, the flag is teal. The flag returns to yellow when the task is complete.

Changing Cell Formats

To change the cell’s format (for example, from code to Markdown), make sure you are in command mode (not edit mode) and that the cell you want to change is selected. The easiest way to do this is to click the flag to the left of the cell. Enter the keyboard shortcut for the format you want to use. The flag’s text changes to display the current format.

| Cell Format | Keyboard Shortcut |
|---|---|
| Code | y |
| Markdown | m |
| Raw text | r |
| Heading 1 | 1 |
| Heading 2 | 2 |
| Heading 3 | 3 |
| Heading 4 | 4 |
| Heading 5 | 5 |
| Heading 6 | 6 |

Running Flows

When you run the flow, a progress bar displays the current status of the flow. You can cancel the currently running flow by clicking the Stop button in the progress bar.

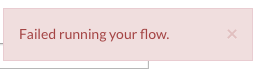

When the flow is complete, a message displays in the upper right. Note: If there is an error in the flow, H2O Flow stops the flow at the cell that contains the error.

Using Keyboard Shortcuts

Here are some important keyboard shortcuts to remember:

- Click a cell and press Enter to enter edit mode, which allows you to change the contents of a cell.

- To exit edit mode, press Esc.

- To execute the contents of a cell, press the Ctrl and Enter keys at the same time.

The following commands must be entered in command mode.

- To add a new cell above the current cell, press a.

- To add a new cell below the current cell, press b.

- To delete the current cell, press the d key twice (dd).

You can view these shortcuts by clicking Help > Keyboard Shortcuts or by clicking the Help tab in the sidebar.

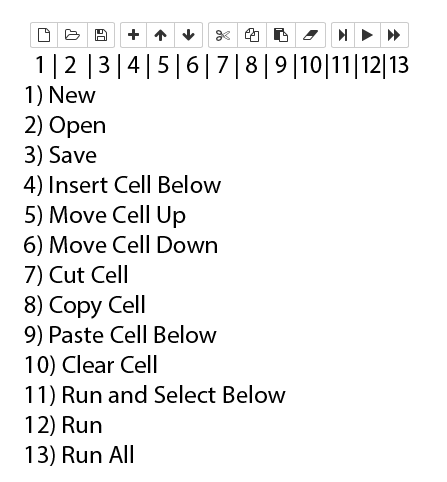

Using Flow Buttons

There are also a series of buttons at the top of the page below the flow name that allow you to save the current flow, add a new cell, move cells up or down, run the current cell, and cut, copy, or paste the current cell. If you hover over the button, a description of the button’s function displays.

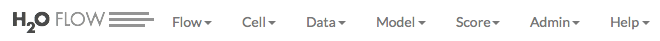

You can also use the menus at the top of the screen to edit the order of the cells, toggle specific format types (such as input or output), create models, or score models. You can also access troubleshooting information or obtain help with Flow.

Note: To disable the code input and use H2O Flow strictly as a GUI, click the Cell menu, then Toggle Cell Input.

Now that you are familiar with the cell modes, let’s import some data.

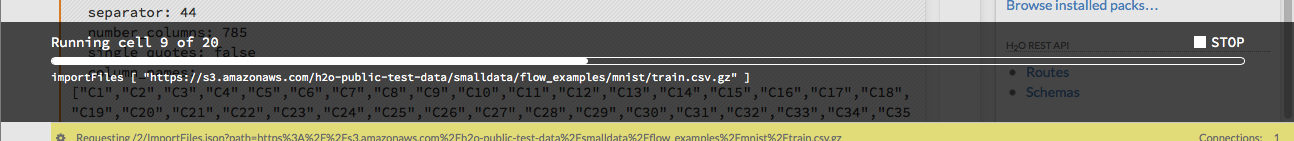

Importing Data

If you don’t have any of your own data to work with, you can find some example datasets here:

There are multiple ways to import data in H2O Flow:

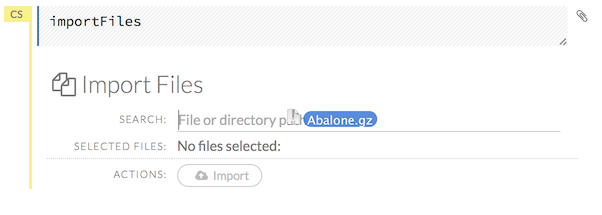

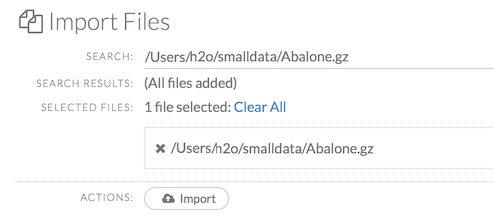

Click the Assist Me! button in the Help sidebar, then click the importFiles link. Enter the file path in the auto-completing Search entry field and press Enter. To select the file from the search results, click the Add All link.

You can also drag and drop the file onto the Search field in the cell.

In a blank cell, select the CS format, then enter importFiles ["path/filename.format"], where path/filename.format represents the complete file path to the file, including the full file name. The file path can be a local file path or a website address.

After selecting the file to import, the file path displays in the “Search Results” section. To import a single file, click the plus sign next to the file. To import all files in the search results, click the Add all link. The files selected for import display in the “Selected Files” section.

Note: If the file is compressed, it will only be read using a single thread. For best performance, we recommend uncompressing the file before importing, as this will allow use of the faster multithreaded distributed parallel reader during import.

To import the selected file(s), click the Import button.

To remove all files from the “Selected Files” list, click the Clear All link.

To remove a specific file, click the X next to the file path.
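Because compressed files are read with a single thread, you may want to decompress large gzip files before importing them. One way to do this with Python's standard gzip and shutil modules is sketched below; the helper name is hypothetical and it handles only .gz files.

```python
import gzip
import shutil
from pathlib import Path


def gunzip_for_import(gz_path):
    """Decompress a .gz file next to the original and return the new path."""
    src = Path(gz_path)
    if src.suffix != ".gz":
        raise ValueError(f"expected a .gz file, got {src.name}")
    dest = src.with_suffix("")  # data.csv.gz -> data.csv
    # Stream in chunks so large files are not loaded into memory at once.
    with gzip.open(src, "rb") as f_in, open(dest, "wb") as f_out:
        shutil.copyfileobj(f_in, f_out)
    return dest
```

You would then pass the returned path to importFiles instead of the .gz file.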

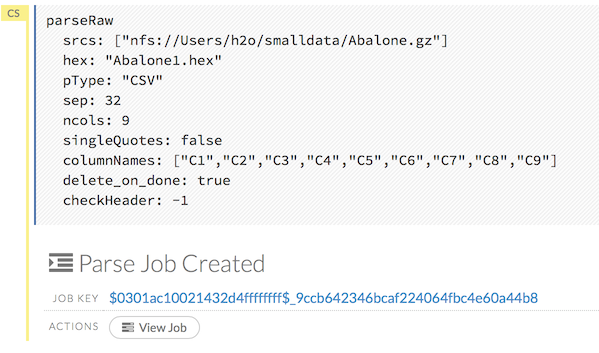

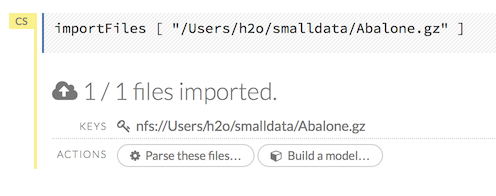

After you click the Import button, the raw code for the current job displays. A summary displays the results of the file import, including the number of imported files and their Network File System (nfs) locations.

Uploading Data

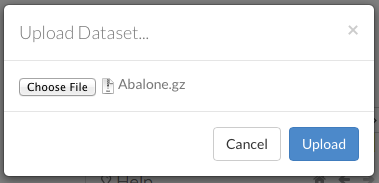

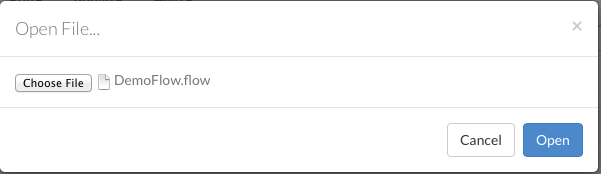

To upload a local file, click the Data menu and select Upload File…. Click the Choose File button, select the file, click the Choose button, then click the Upload button.

When the file has uploaded successfully, a message displays in the upper right and the Setup Parse cell displays.

Ok, now that your data is available in H2O Flow, let’s move on to the next step: parsing. Click the Parse these files button to continue.

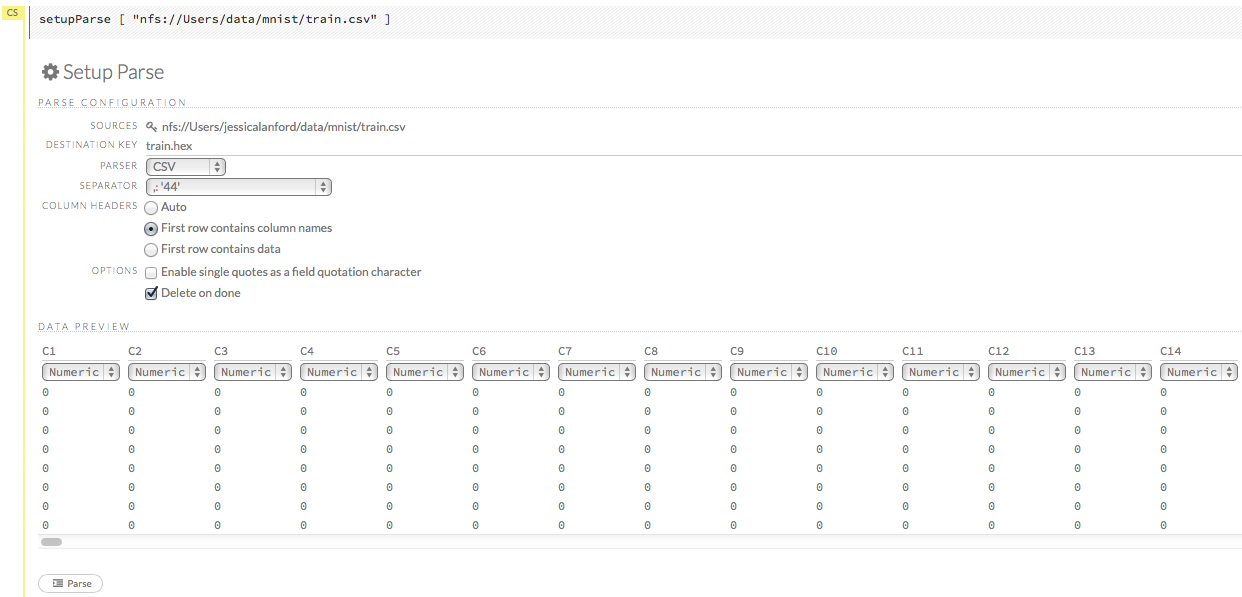

Parsing Data

After you have imported your data, parse the data.

Select the parser type (if necessary) from the drop-down Parser list. For most data parsing, H2O automatically recognizes the data type, so the default settings typically do not need to be changed. The following options are available:

- Auto

- ARFF

- XLS

- XLSX

- CSV

- SVMLight

If a separator or delimiter is used, select it from the Separator list.

Select a column header option, if applicable:

- Auto: Automatically detect header types.

- First row contains column names: Specify heading as column names.

- First row contains data: Specify heading as data. This option is selected by default.

Select any necessary additional options:

- Enable single quotes as a field quotation character: Treat single quote marks (also known as apostrophes) in the data as a character, rather than an enum. This option is not selected by default.

- Delete on done: Check this checkbox to delete the imported data after parsing. This option is selected by default.

A preview of the data displays in the “Data Preview” section.

Note: To change the column type, select the drop-down list at the top of the column and select the data type. The options are:

- Unknown

- Numeric

- Enum

- Time

- UUID

- String

- Invalid

After making your selections, click the Parse button.

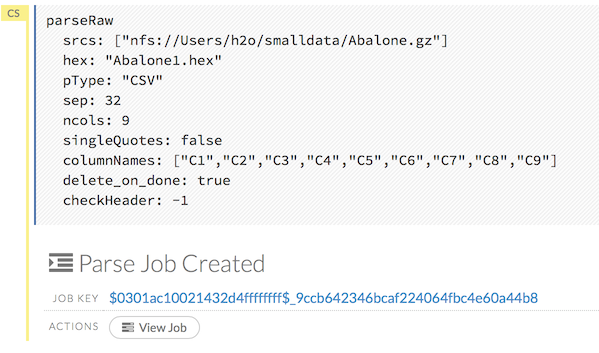

After you click the Parse button, the code for the current job displays.

Since we’ve submitted a couple of jobs (data import & parse) to H2O now, let’s take a moment to learn more about jobs in H2O.

Viewing Jobs

Any command (such as importFiles) you enter in H2O is submitted as a job, which is associated with a key. The key identifies the job within H2O and is used as a reference.

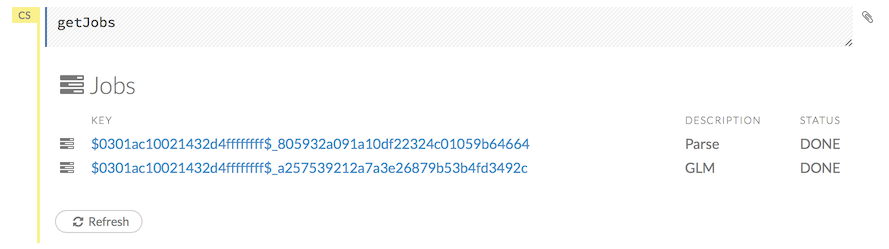

Viewing All Jobs

To view all jobs, click the Admin menu, then click Jobs, or enter getJobs in a cell in CS mode.

The following information displays:

- Type (for example, Frame or Model)

- Link to the object

- Description of the job type (for example, Parse or GBM)

- Start time

- End time

- Run time

To refresh this information, click the Refresh button. To view the details of the job, click the View button.

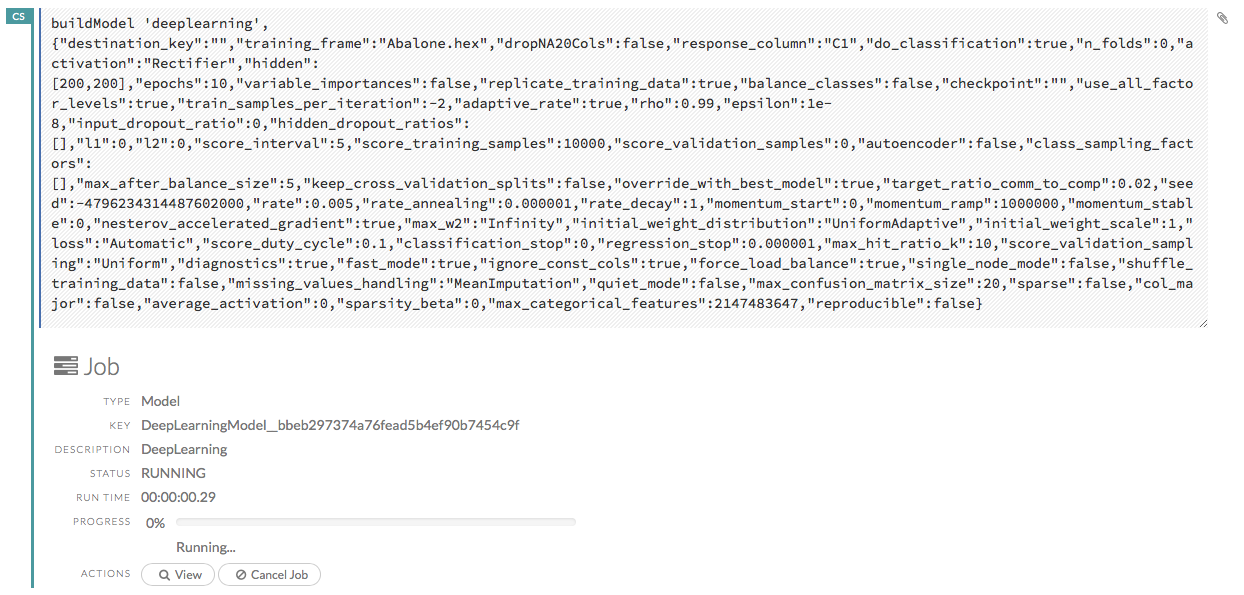

Viewing Specific Jobs

To view a specific job, click the link in the “Destination” column.

The following information displays:

- Type (for example, Frame)

- Link to object (key)

- Description (for example, Parse)

- Status

- Run time

- Progress

NOTE: For a better understanding of how jobs work, make sure to review the Viewing Frames section as well.

Ok, now that you understand how to find jobs in H2O, let’s submit a new one by building a model.

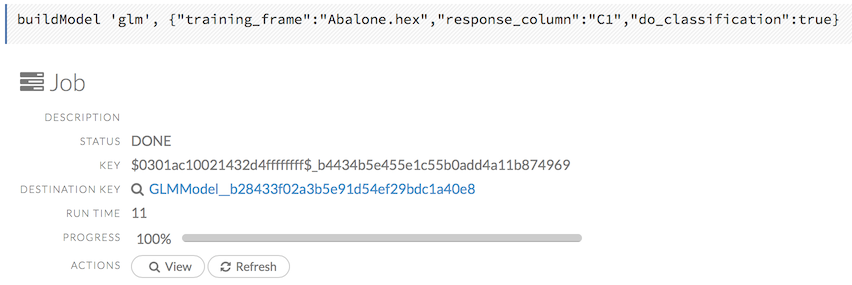

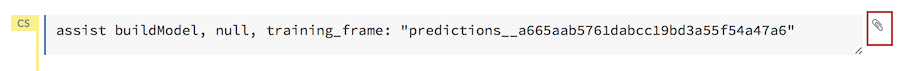

Building Models

To build a model:

Click the Assist Me! button and select buildModel

or

Click the Assist Me! button, select getFrames, then click the Build Model… button below the parsed .hex data set

or

Click the View button after parsing data, then click the Build Model button

or

Click the drop-down Model menu and select the model type from the list

The Build Model… button can be accessed from any page containing the .hex key for the parsed data (for example, getJobs > getFrame).

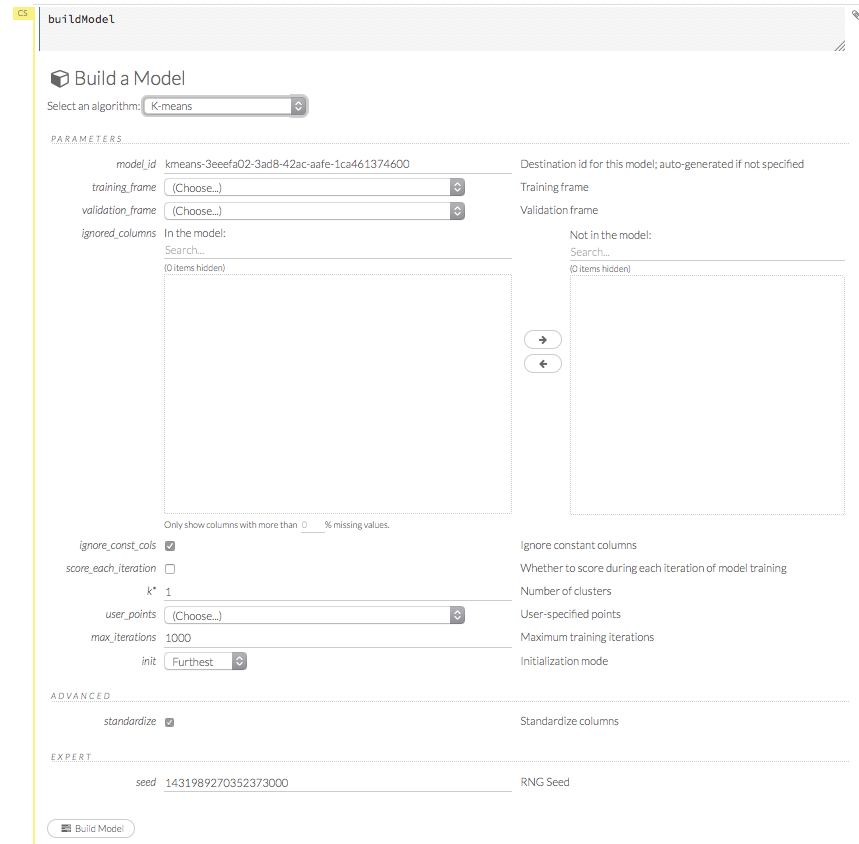

In the Build a Model cell, select an algorithm from the drop-down menu:

- K-means: Create a K-Means model.

- Generalized Linear Model: Create a Generalized Linear model.

- Distributed RF: Create a distributed Random Forest model.

- Naïve Bayes: Create a Naïve Bayes model.

- Principal Component Analysis: Create a Principal Components Analysis model for modeling without regularization or performing dimensionality reduction.

- Gradient Boosting Machine: Create a Gradient Boosted model.

- Deep Learning: Create a Deep Learning model.

The available options vary depending on the selected model. If an option is only available for a specific model type, the model type is listed. If no model type is specified, the option is applicable to all model types.

Model_ID: (Optional) Enter a custom name for the model to use as a reference. By default, H2O automatically generates an ID containing the model type (for example, gbm-6f6bdc8b-ccbc-474a-b590-4579eea44596).

Training_frame: (Required) Select the dataset used to build the model.

NOTE: If you click the Build a model button from the Parse cell, the training frame is entered automatically.

Validation_frame: (Optional) Select the dataset used to evaluate the accuracy of the model.

Ignored_columns: (Optional) Click the plus sign next to a column name to add it to the list of columns excluded from the model. To add all columns, click the -> button. To remove a column from the list of ignored columns, click the X next to the column name. To remove all columns from the list of ignored columns, click the <- button. To search for a specific column, type the column name in the Search field above the column list. To only show columns with a specific percentage of missing values, specify the percentage in the Only show columns with more than 0% missing values field.

User_points: (K-Means, PCA) For K-Means, specify the number of initial cluster centers. For PCA, specify the initial Y matrix. Note: The PCA User_points parameter should only be used by advanced users for testing purposes.

Transform: (PCA) Select the transformation method for the training data: None, Standardize, Normalize, Demean, or Descale. The default is None.

Response_column: (Required for GLM, GBM, DL, DRF, Naïve Bayes) Select the column to use as the dependent variable (the response the model predicts).

Solver: (GLM) Select the solver to use (IRLSM, L_BFGS, or auto). IRLSM is fast on problems with a small number of predictors and for lambda search with an L1 penalty, while L_BFGS scales better for datasets with many columns. The default is IRLSM.

Ntrees: (GBM, DRF) Specify the number of trees. The default value is 50.

Max_depth: (GBM, DRF) Specify the maximum tree depth. For GBM, the default value is 5. For DRF, the default value is 20.

Min_rows: (GBM, DRF) Specify the minimum number of observations for a leaf (“nodesize” in R). For Grid Search, use comma-separated values. The default value is 10.

Nbins: (GBM, DRF) Specify the number of bins for the histogram. The default value is 20.

Mtries: (DRF) Specify the number of columns to randomly select at each level. To use the square root of the number of columns, enter -1. The default value is -1.

Sample_rate: (DRF) Specify the sample rate. The range is 0 to 1.0 and the default value is 0.632.

Build_tree_one_node: (DRF) To run on a single node, check this checkbox. This is suitable for small datasets as there is no network overhead but fewer CPUs are used. The default setting is disabled.

Learn_rate: (GBM) Specify the learning rate. The range is 0.0 to 1.0 and the default is 0.1.

Distribution: (GBM) Select the distribution type from the drop-down list. The options are auto, bernoulli, multinomial, or gaussian and the default is auto.

Loss: (DL) Select the loss function. For DL, the options are Automatic, MeanSquare, CrossEntropy, Huber, or Absolute and the default value is Automatic. Absolute, MeanSquare, and Huber are applicable for regression or classification, while CrossEntropy is only applicable for classification. Huber can improve results for regression problems with outliers.

Score_each_iteration: (K-Means, DRF, Naïve Bayes, PCA, GBM) To score during each iteration of the model training, check this checkbox.

K: (K-Means, PCA) For K-Means, specify the number of clusters. For PCA, specify the rank of matrix approximation. The default for K-Means and PCA is 1.

Gamma: (PCA) Specify the regularization weight for PCA. The default is 0.

Max_iterations: (K-Means, PCA, GLM) Specify the number of training iterations. For K-Means and PCA, the default is 1000. For GLM, the default is -1.

Beta_epsilon: (GLM) Specify the beta epsilon value. If the L1 norm of the current change in beta is below this threshold, the model is considered to have converged.
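The Beta_epsilon convergence test can be sketched as follows: sum the absolute changes in the coefficients between iterations (the L1 norm of the change) and compare the sum against the threshold. This is an illustration of the rule as stated, not H2O's GLM code, and the function name is hypothetical.

```python
def beta_converged(beta_old, beta_new, beta_epsilon):
    """True when the L1 norm of the coefficient change is below beta_epsilon."""
    if len(beta_old) != len(beta_new):
        raise ValueError("coefficient vectors must have the same length")
    change = sum(abs(new - old) for old, new in zip(beta_old, beta_new))
    return change < beta_epsilon
```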

Init: (K-Means, PCA) Select the initialization mode. For K-Means, the options are Furthest, PlusPlus, Random, or User. For PCA, the options are PlusPlus, User, or None.

Note: If PlusPlus is selected, the initial Y matrix is chosen by the final cluster centers from the K-Means PlusPlus algorithm.

Family: (GLM) Select the model type (Gaussian, Binomial, Poisson, or Gamma).

Activation: (DL) Select the activation function (Tanh, TanhWithDropout, Rectifier, RectifierWithDropout, Maxout, MaxoutWithDropout). The default option is Rectifier.

Hidden: (DL) Specify the hidden layer sizes (e.g., 100,100). For Grid Search, use comma-separated values: (10,10),(20,20,20). The default value is [200,200]. The specified value(s) must be positive.

Epochs: (DL) Specify the number of times to iterate (stream) the dataset. The value can be a fraction. The default value for DL is 10.0.

Variable_importances: (DL) Check this checkbox to compute variable importance. This option is not selected by default.

Laplace: (Naïve Bayes) Specify the Laplace smoothing parameter. The default value is 0.

Min_sdev: (Naïve Bayes) Specify the minimum standard deviation to use for observations without enough data. The default value is 0.001.

Eps_sdev: (Naïve Bayes) Specify the threshold for standard deviation. If this threshold is not met, the min_sdev value is used. The default value is 0.

Min_prob: (Naïve Bayes) Specify the minimum probability to use for observations without enough data. The default value is 0.001.

Eps_prob: (Naïve Bayes) Specify the threshold for probability. If this threshold is not met, the min_prob value is used. The default value is 0.
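The Naïve Bayes smoothing and threshold parameters above can be illustrated with the standard formulas below. The Laplace formula is the textbook add-k estimate for a categorical feature. Replacing a value that is at or below the threshold (rather than strictly below it) is our assumption, chosen so that the default threshold of 0 still guards against a zero standard deviation or probability. The function names are hypothetical.

```python
def smoothed_prob(count, class_count, n_levels, laplace=0.0):
    """P(feature = level | class) with Laplace (add-k) smoothing."""
    return (count + laplace) / (class_count + laplace * n_levels)


def effective_sdev(sdev, min_sdev=0.001, eps_sdev=0.0):
    """Use min_sdev when the standard deviation is at or below eps_sdev."""
    return min_sdev if sdev <= eps_sdev else sdev


def effective_prob(prob, min_prob=0.001, eps_prob=0.0):
    """Use min_prob when the probability is at or below eps_prob."""
    return min_prob if prob <= eps_prob else prob
```

With laplace set to 1, a level never seen in a class of 10 rows (3 levels in total) gets probability 1/13 instead of 0.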

Standardize: (K-Means, GLM) To standardize the numeric columns to have mean of zero and unit variance, check this checkbox. Standardization is highly recommended; if you do not use standardization, the results can include components that are dominated by variables that appear to have larger variances relative to other attributes as a matter of scale, rather than true contribution. This option is selected by default.
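Standardization as described above can be sketched in a few lines. This version uses the population standard deviation and maps constant columns to zeros; H2O's exact conventions may differ, and the function name is hypothetical.

```python
from statistics import mean, pstdev


def standardize(column):
    """Rescale a numeric column to mean 0 and unit (population) variance."""
    mu = mean(column)
    sd = pstdev(column)
    if sd == 0:
        return [0.0 for _ in column]  # constant column: nothing to scale
    return [(x - mu) / sd for x in column]


print(standardize([2, 4, 4, 4, 5, 5, 7, 9]))
```

After rescaling, a column measured in thousands and a column measured in fractions contribute on the same scale.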

Beta_constraints: (GLM) To use beta constraints, select a dataset from the drop-down menu. The selected frame is used to constrain the coefficient vector to provide upper and lower bounds.

Advanced Options

Checkpoint: (DL) Enter a model key associated with a previously-trained Deep Learning model. Use this option to build a new model as a continuation of a previously-generated model (e.g., by a grid search).

Use_all_factor_levels: (DL) Check this checkbox to use all factor levels in the possible set of predictors; if you enable this option, sufficient regularization is required. By default, the first factor level is skipped. For Deep Learning models, this option is useful for determining variable importances and is automatically enabled if the autoencoder is selected.

Train_samples_per_iteration: (DL) Specify the number of global training samples per MapReduce iteration. To specify one epoch, enter 0. To specify all available data (e.g., replicated training data), enter -1. To use the automatic values, enter -2. The default is -2.

Adaptive_rate: (DL) Check this checkbox to enable the adaptive learning rate (ADADELTA). This option is selected by default. If this option is enabled, the following parameters are ignored: rate, rate_decay, rate_annealing, momentum_start, momentum_ramp, momentum_stable, and nesterov_accelerated_gradient.

Input_dropout_ratio: (DL) Specify the input layer dropout ratio to improve generalization. Suggested values are 0.1 or 0.2. The range is >= 0 to < 1 and the default value is 0.

L1: (DL) Specify the L1 regularization to add stability and improve generalization; sets the value of many weights to 0. The default value is 0.

L2: (DL) Specify the L2 regularization to add stability and improve generalization; sets the value of many weights to smaller values. The default value is 0.

Score_interval: (DL) Specify the shortest time interval (in seconds) to wait between model scoring. The default value is 5.

Score_training_samples: (DL) Specify the number of training set samples for scoring. To use all training samples, enter 0. The default value is 10000.

Score_validation_samples: (DL) (Requires selection from the Validation_Frame drop-down list) Specify the number of validation set samples for scoring. To use all validation set samples, enter 0. The default value is 0. This option is applicable to classification only.

Score_duty_cycle: (DL) Specify the maximum duty cycle fraction for scoring. A lower value results in more training and a higher value results in more scoring. The default value is 0.1.

Autoencoder: (DL) Check this checkbox to enable the Deep Learning autoencoder. This option is not selected by default. Note: This option requires a loss function other than CrossEntropy. If this option is enabled, use_all_factor_levels must be enabled.

Balance_classes: (GLM, GBM, DRF, DL, Naïve Bayes) Oversample the minority classes to balance the class distribution. This option is not selected by default. This option is only applicable for classification. Majority classes can be undersampled to satisfy the Max_after_balance_size parameter.

Max_confusion_matrix_size: (DRF, Naïve Bayes, GBM) Specify the maximum size (in number of classes) for confusion matrices to be printed in the Logs.

Max_hit_ratio_k: (DRF, Naïve Bayes) Specify the maximum number (top K) of predictions to use for hit ratio computation. Applicable to multi-class only. To disable, enter 0.

Link: (GLM) Select a link function (Identity, Family_Default, Logit, Log, or Inverse).

Alpha: (GLM) Specify the regularization distribution between L1 and L2. The default value is 0.5.

Lambda: (GLM) Specify the regularization strength. There is no default value.

Lambda_search: (GLM) Check this checkbox to enable lambda search, starting with lambda max. The given lambda is then interpreted as lambda min.
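Alpha and Lambda combine into the elastic-net penalty: Alpha sets the mix of L1 (lasso) and L2 (ridge) regularization, and Lambda sets the overall strength. The sketch below computes the penalty in its common glmnet-style form, lambda * (alpha * L1 norm + (1 - alpha) / 2 * squared L2 norm), assuming the intercept is excluded from beta. The function name is hypothetical.

```python
def elastic_net_penalty(beta, lam, alpha=0.5):
    """lam * (alpha * ||beta||_1 + (1 - alpha) / 2 * ||beta||_2^2)."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    l1 = sum(abs(b) for b in beta)
    l2_sq = sum(b * b for b in beta)
    return lam * (alpha * l1 + (1.0 - alpha) / 2.0 * l2_sq)
```

Setting alpha to 1 gives a pure L1 (lasso) penalty and setting it to 0 gives a pure L2 (ridge) penalty.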

Rate: (DL) Specify the learning rate. Higher rates result in less stable models and lower rates result in slower convergence. The default value is 0.005. Not applicable if adaptive_rate is enabled.

Rate_annealing: (DL) Specify the learning rate annealing. The formula is rate/(1 + rate_annealing * samples). The default value is 0.000001. Not applicable if adaptive_rate is enabled.
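The annealing formula above shrinks the learning rate as more training samples are processed. A direct transcription follows; the function name and the example values are illustrative.

```python
def annealed_rate(rate, rate_annealing, samples):
    """Learning rate after `samples` training samples: rate / (1 + rate_annealing * samples)."""
    return rate / (1.0 + rate_annealing * samples)


# With rate = 0.005 and rate_annealing = 1e-6, the rate halves after one million samples.
print(annealed_rate(0.005, 1e-6, 1_000_000))
```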

Momentum_start: (DL) Specify the initial momentum at the beginning of training. A suggested value is 0.5. The default value is 0. Not applicable if adaptive_rate is enabled.

Momentum_ramp: (DL) Specify the number of training samples for increasing the momentum. The default value is 1000000. Not applicable if adaptive_rate is enabled.

Momentum_stable: (DL) Specify the final momentum value reached after the momentum_ramp training samples. Not applicable if adaptive_rate is enabled.
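Momentum_start, Momentum_ramp, and Momentum_stable together describe a schedule: momentum starts at momentum_start and reaches momentum_stable after momentum_ramp training samples. The sketch below assumes a linear ramp between the two values; the shape of H2O's internal ramp is not stated here, and the function name is hypothetical.

```python
def momentum(samples, momentum_start, momentum_ramp, momentum_stable):
    """Momentum after `samples` training samples, assuming a linear ramp."""
    if samples >= momentum_ramp:
        return momentum_stable
    fraction = samples / momentum_ramp
    return momentum_start + (momentum_stable - momentum_start) * fraction
```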

Nesterov_accelerated_gradient: (DL) Check this checkbox to use the Nesterov accelerated gradient. This option is recommended and selected by default. Not applicable if adaptive_rate is enabled.

Hidden_dropout_ratios: (DL) Specify the hidden layer dropout ratios to improve generalization. Specify one value per hidden layer, each value between 0 and 1 (exclusive). There is no default value. This option is applicable only if TanhWithDropout, RectifierWithDropout, or MaxoutWithDropout is selected from the Activation drop-down list.

Expert Options

Keep_cross_validation_splits: (DL) Check this checkbox to keep the cross-validation frames. This option is not selected by default.

Overwrite_with_best_model: (DL) Check this checkbox to overwrite the final model with the best model found during training. This option is selected by default.

Target_ratio_comm_to_comp: (DL) Specify the target ratio of communication overhead to computation. This option is only enabled for multi-node operation and if train_samples_per_iteration equals -2 (auto-tuning). The default value is 0.02.

Rho: (DL) Specify the adaptive learning rate time decay factor. The default value is 0.99. This option is only applicable if adaptive_rate is enabled.

Epsilon: (DL) Specify the adaptive learning rate time smoothing factor to avoid dividing by zero. The default value is 1.0E-8. This option is only applicable if adaptive_rate is enabled.
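Rho and Epsilon are the two constants of ADADELTA, which we understand to be the adaptive learning rate method H2O Deep Learning uses when adaptive_rate is enabled. The Python class below is a minimal single-weight sketch of the textbook ADADELTA update, not H2O's implementation; the class name `AdaDelta` is hypothetical.

```python
import math


class AdaDelta:
    """Per-weight ADADELTA state: running averages of squared gradients and squared updates."""

    def __init__(self, rho=0.99, epsilon=1e-8):
        self.rho = rho              # time decay factor for both running averages
        self.epsilon = epsilon      # smoothing term that prevents division by zero
        self.mean_sq_grad = 0.0
        self.mean_sq_delta = 0.0

    def step(self, weight, grad):
        self.mean_sq_grad = self.rho * self.mean_sq_grad + (1 - self.rho) * grad * grad
        # Effective rate = RMS of past updates / RMS of gradients.
        rate = math.sqrt(self.mean_sq_delta + self.epsilon) / math.sqrt(self.mean_sq_grad + self.epsilon)
        delta = -rate * grad
        self.mean_sq_delta = self.rho * self.mean_sq_delta + (1 - self.rho) * delta * delta
        return weight + delta


# One step on f(w) = w**2, whose gradient is 2 * w.
optimizer = AdaDelta(rho=0.99, epsilon=1e-8)
w = optimizer.step(1.0, grad=2.0)
print(w)
```

Because no global learning rate appears in the update, the Rate, Rate_annealing, and momentum options above do not apply when adaptive_rate is enabled.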

Max_W2: (DL) Specify the constraint for the squared sum of the incoming weights per unit (e.g., for Rectifier). The default value is infinity.
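One common way to enforce a limit on the squared sum of a unit's incoming weights is to rescale them whenever the limit is exceeded. The Python sketch below assumes this max-norm style rescaling; `apply_max_w2` is a hypothetical name and H2O's exact update may differ.

```python
import math


def apply_max_w2(incoming_weights, max_w2=float("inf")):
    """Rescale one unit's incoming weights so their squared sum does not exceed max_w2."""
    squared_sum = sum(w * w for w in incoming_weights)
    if squared_sum <= max_w2:
        return list(incoming_weights)  # constraint already satisfied (always true for infinity)
    scale = math.sqrt(max_w2 / squared_sum)
    return [w * scale for w in incoming_weights]


print(apply_max_w2([3.0, 4.0], max_w2=1.0))
```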

Initial_weight_distribution: (DL) Select the initial weight distribution (Uniform Adaptive, Uniform, or Normal). The default is Uniform Adaptive. If Uniform Adaptive is used, the initial_weight_scale parameter is not applicable.

Initial_weight_scale: (DL) Specify the initial weight scale of the distribution function for Uniform or Normal distributions. For Uniform, the values are drawn uniformly from the range [-initial_weight_scale, initial_weight_scale]. For Normal, the values are drawn from a Normal distribution with a standard deviation equal to the initial weight scale. The default value is 1.0. If Uniform Adaptive is selected as the initial_weight_distribution, the initial_weight_scale parameter is not applicable.
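The effect of Initial_weight_scale on the Uniform and Normal distributions can be sketched in Python. This assumes a symmetric uniform range around zero and a zero-mean normal; `init_weights` is a hypothetical helper, and Uniform Adaptive is deliberately not modeled because it ignores the scale.

```python
import random


def init_weights(fan_in, distribution="Uniform", initial_weight_scale=1.0, seed=None):
    """Draw one unit's incoming weights.

    Uniform: values in [-initial_weight_scale, initial_weight_scale].
    Normal: mean 0, standard deviation initial_weight_scale.
    """
    rng = random.Random(seed)
    if distribution == "Uniform":
        return [rng.uniform(-initial_weight_scale, initial_weight_scale) for _ in range(fan_in)]
    if distribution == "Normal":
        return [rng.gauss(0.0, initial_weight_scale) for _ in range(fan_in)]
    raise ValueError("only Uniform and Normal use initial_weight_scale in this sketch")


weights = init_weights(1000, "Uniform", initial_weight_scale=0.1, seed=42)
print(min(weights), max(weights))
```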

Classification_stop: (DL) (Applicable to discrete/categorical datasets only) Specify the stopping criterion for classification error fractions on training data. To disable this option, enter -1. The default value is 0.0.

Max_hit_ratio_k: (DL, GLM) (Classification only) Specify the maximum number (top K) of predictions to use for hit ratio computation (for multi-class only). To disable this option, enter 0. The default value is 10.
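A hit ratio at k is the fraction of rows whose actual class appears among the k most probable predicted classes. The Python sketch below computes hit ratios for k = 1 through Max_hit_ratio_k from per-class probabilities; `hit_ratios` is a hypothetical name and the inputs are made-up illustration data.

```python
def hit_ratios(probabilities, actual, k=10):
    """Return [hit@1, ..., hit@k] for rows of class probabilities and actual class indices."""
    hits = [0] * k
    for probs, label in zip(probabilities, actual):
        ranked = sorted(range(len(probs)), key=lambda c: probs[c], reverse=True)
        position = ranked.index(label)  # 0 means the top prediction was correct
        for j in range(position, k):    # a hit at rank j counts for every k >= j + 1
            hits[j] += 1
    return [h / len(actual) for h in hits]


probs = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6], [0.5, 0.4, 0.1]]
print(hit_ratios(probs, actual=[0, 1, 1], k=3))
```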

Regression_stop: (DL) (Applicable to real value/continuous datasets only) Specify the stopping criterion for regression error (MSE) on the training data. To disable this option, enter -1. The default value is 0.000001.

Diagnostics: (DL) Check this checkbox to compute the variable importances for input features (using the Gedeon method). For large networks, selecting this option can reduce speed. This option is selected by default.

Fast_mode: (DL) Check this checkbox to enable fast mode, a minor approximation in back-propagation. This option is selected by default.

Ignore_const_cols: Check this checkbox to ignore constant training columns, since no information can be gained from them. This option is selected by default.

Force_load_balance: (DL) Check this checkbox to force extra load balancing to increase training speed for small datasets and use all cores. This option is selected by default.

Single_node_mode: (DL) Check this checkbox to force H2O to run on a single node for fine-tuning of model parameters. This option is not selected by default.

Replicate_training_data: (DL) Check this checkbox to replicate the entire training dataset on every node for faster training on small datasets. This option is not selected by default. This option is only applicable for clouds with more than one node.

Shuffle_training_data: (DL) Check this checkbox to shuffle the training data. This option is recommended if the training data is replicated and the value of train_samples_per_iteration is close to the number of nodes times the number of rows. This option is not selected by default.

Missing_values_handling: (DL) Select how to handle missing values (Skip or MeanImputation). The default value is MeanImputation.
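As we read the two option names, Skip drops rows that contain a missing value, while MeanImputation replaces each missing value with its column mean. The Python sketch below illustrates both behaviors on a plain list of rows; `handle_missing` is hypothetical, and filling an all-missing column with 0.0 is an assumption of the sketch.

```python
import math


def handle_missing(rows, strategy="MeanImputation"):
    """rows: list of numeric rows; None or NaN marks a missing value."""
    def missing(v):
        return v is None or (isinstance(v, float) and math.isnan(v))

    if strategy == "Skip":
        return [list(r) for r in rows if not any(missing(v) for v in r)]
    if strategy == "MeanImputation":
        n_cols = len(rows[0]) if rows else 0
        means = []
        for c in range(n_cols):
            present = [r[c] for r in rows if not missing(r[c])]
            means.append(sum(present) / len(present) if present else 0.0)  # 0.0 if all missing (assumption)
        return [[means[c] if missing(v) else v for c, v in enumerate(r)] for r in rows]
    raise ValueError(f"unknown strategy: {strategy}")


data = [[1.0, None], [3.0, 4.0], [None, 6.0]]
print(handle_missing(data, "Skip"))
print(handle_missing(data, "MeanImputation"))
```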

Quiet_mode: (DL) Check this checkbox to display less output in the standard output. This option is not selected by default.

Sparse: (DL) Check this checkbox to use sparse iterators for the input layer. This option is not selected by default as it rarely improves performance.

Col_major: (DL) Check this checkbox to use a column major weight matrix for the input layer. This option can speed up forward propagation but may reduce the speed of backpropagation. This option is not selected by default.

Average_activation: (DL) Specify the average activation for the sparse autoencoder. The default value is 0. If Rectifier is selected as the Activation type, this value must be positive. For Tanh, the value must be in (-1,1).

Sparsity_beta: (DL) Specify the sparsity regularization. The default value is 0.

Max_categorical_features: (DL) Specify the maximum number of categorical features enforced via hashing. The default is unlimited.
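Feature hashing caps the number of categorical inputs by mapping every level to one of a fixed number of buckets, so distinct levels may collide. The Python sketch below shows the idea with an MD5-based hash; the helper name and the choice of hash function are assumptions, not H2O's internal scheme. MD5 is used because Python's built-in `hash` for strings changes between processes.

```python
import hashlib


def hashed_feature_index(level, max_categorical_features):
    """Map a categorical level to one of max_categorical_features buckets."""
    digest = hashlib.md5(str(level).encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "little") % max_categorical_features


# 100 distinct levels squeezed into 4 buckets: collisions are unavoidable.
buckets = {hashed_feature_index(f"level{i}", 4) for i in range(100)}
print(sorted(buckets))
```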

Reproducible: (DL) To force reproducibility on small data, check this checkbox. If this option is enabled, the model takes more time to generate, since it uses only one thread.

Export_weights_and_biases: (DL) To export the neural network weights and biases as H2O frames, check this checkbox.

Class_sampling_factors: (GLM, DRF, Naïve Bayes, GBM, DL) Specify the per-class (in lexicographical order) over/under-sampling ratios. By default, these ratios are automatically computed during training to obtain the class balance. There is no default value. This option is only applicable for classification problems and when Balance_Classes is enabled.
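Because the factors are matched to classes in lexicographical order, it helps to sort the class labels before pairing them with factors. The Python sketch below shows how user-specified factors would scale each class's row count; `expected_class_counts` is hypothetical, and the automatic balancing computation is not modeled.

```python
from collections import Counter


def expected_class_counts(labels, class_sampling_factors):
    """Scale each class's row count by its sampling factor.

    Factors are matched to classes in lexicographical order of the class labels.
    """
    counts = Counter(labels)
    classes = sorted(counts)  # lexicographical order for plain ASCII labels
    if len(class_sampling_factors) != len(classes):
        raise ValueError("need exactly one sampling factor per class")
    return {c: counts[c] * f for c, f in zip(classes, class_sampling_factors)}


# Classes sort as maybe, no, yes: oversample "maybe" 2x, undersample "no" by half.
print(expected_class_counts(["yes", "no", "no", "no", "maybe"], [2.0, 0.5, 1.0]))
```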

Seed: (K-Means, GBM, DL, DRF) Specify the random number generator (RNG) seed for algorithm components dependent on randomization. The seed is consistent for each H2O instance so that you can create models with the same starting conditions in alternative configurations.

Prior: (GLM) Specify the prior probability for y == 1. Use this parameter for logistic regression if the data has been sampled and the mean of the response does not reflect reality. The default value is -1.
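A common way to correct a logistic regression trained on resampled data is to shift its intercept by the difference between the log-odds of the true prior and the log-odds of the sampled response mean. The Python sketch below shows that standard prior-correction technique; we have not confirmed that H2O applies exactly this adjustment, and both function names are hypothetical.

```python
import math


def logit(p):
    """Log-odds of probability p."""
    return math.log(p / (1.0 - p))


def prior_corrected_intercept(intercept, sample_mean, prior):
    """Shift a logistic-regression intercept from the sampled response mean to `prior`.

    sample_mean: mean of y in the sampled training data, e.g. 0.5 after balancing.
    prior: the true probability of y == 1 in the population.
    """
    return intercept + logit(prior) - logit(sample_mean)


# A model trained on 50/50 sampled data, when the real positive rate is 10%.
b0 = prior_corrected_intercept(0.0, sample_mean=0.5, prior=0.1)
print(1.0 / (1.0 + math.exp(-b0)))  # baseline probability after correction, ≈ 0.1
```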

Max_active_predictors: (GLM) Specify the maximum number of active predictors during computation. This value is used as a stopping criterion to prevent expensive model building with many predictors.

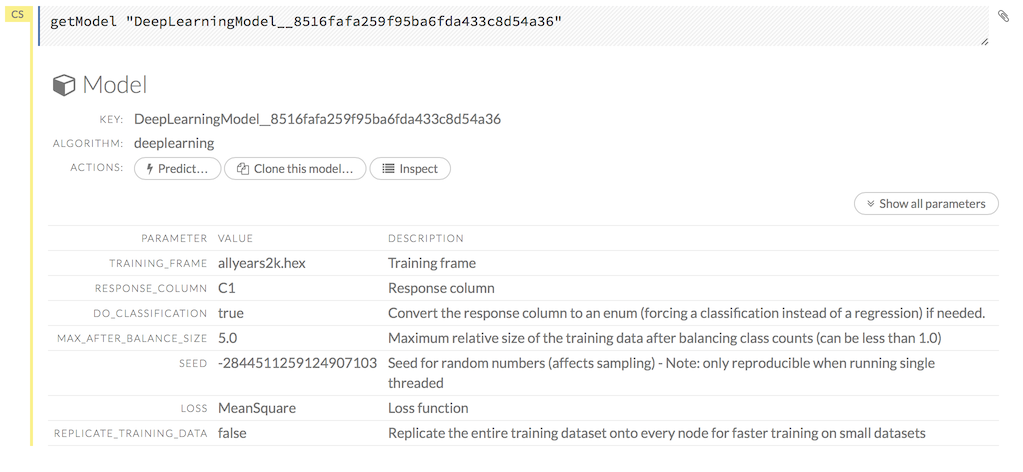

Viewing Models

Click the Assist Me! button, then click the getModels link, or enter getModels in the cell in CS mode and press Ctrl+Enter. A list of available models displays.

To view all current models, you can also click the Model menu and click List All Models.

To inspect a model, select its checkbox, then click the Inspect button, or click the Inspect button to the right of the model name.

A summary of the model’s parameters displays. To display more details, click the Show All Parameters button.

NOTE: The Clone this model… button will be supported in a future version.

To delete a model, click the Delete button.

To generate a POJO so that you can use the model outside of H2O, click the Preview POJO button.

To learn how to make predictions, continue to the next section.

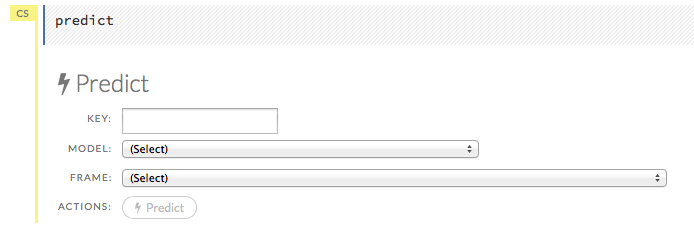

Making Predictions

After creating your model, click the key link for the model, then click the Predict button. Select the model to use in the prediction from the drop-down Model: menu and the data frame to use in the prediction from the drop-down Frame menu, then click the Predict button.

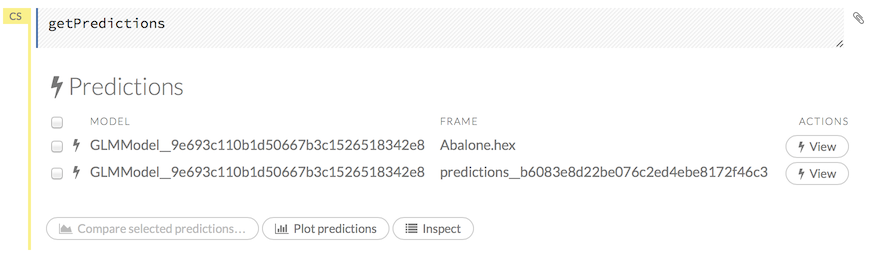

Viewing Predictions

Click the Assist Me! button, then click the getPredictions link, or enter getPredictions in the cell in CS mode and press Ctrl+Enter. A list of the stored predictions displays.

To view a prediction, click the View button to the right of the model name.

You can also view predictions by clicking the drop-down Score menu and selecting List All Predictions.

Viewing Frames

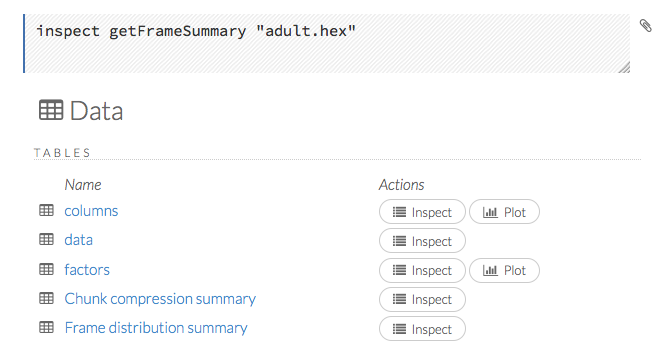

To view a specific frame, click the “Key” link for the specified frame, or enter getFrameSummary "FrameName" in a cell in CS mode (where FrameName is the name of a frame, such as allyears2k.hex).

From the getFrameSummary cell, you can:

- view a truncated list of the rows in the data frame by clicking the View Data button

- split the dataset by clicking the Split… button

- view the columns, data, and factors in more detail or plot a graph by clicking the Inspect button

- create a model by clicking the Build Model button

- make a prediction based on the data by clicking the Predict button

- download the data as a .csv file by clicking the Download button

- view the characteristics or domain of a specific column by clicking the Summary link

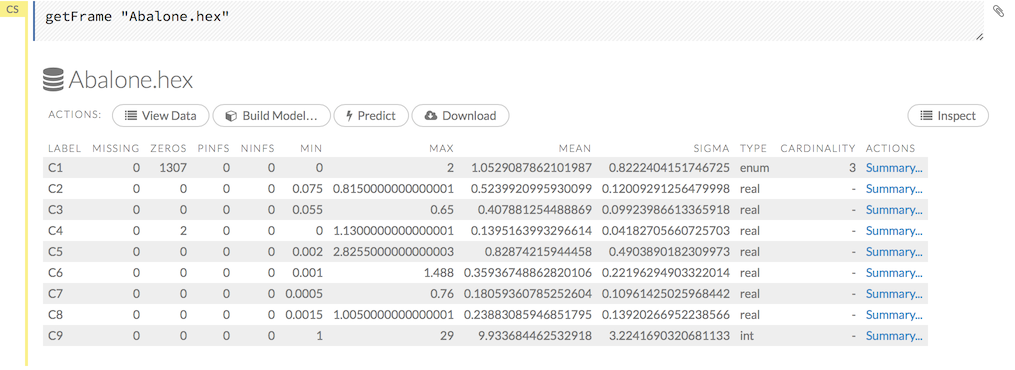

When you view a frame, you can “drill-down” to the necessary level of detail (such as a specific column or row) using the Inspect button or by clicking the links. The following screenshot displays the results of clicking the Inspect button for a frame.

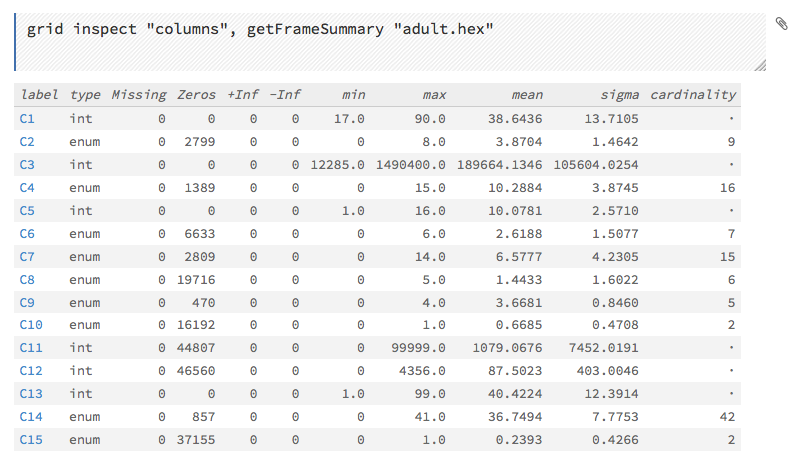

This screenshot displays the results of clicking the columns link.

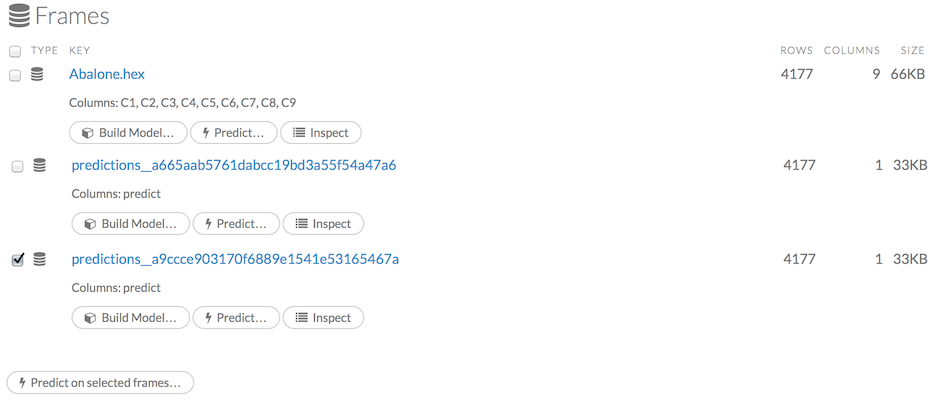

To view all frames, click the Assist Me! button, then click the getFrames link, or enter getFrames in the cell in CS mode and press Ctrl+Enter. You can also view all current frames by clicking the drop-down Data menu and selecting List All Frames.

A list of the current frames in H2O displays, including the following information for each frame:

- Link to the frame (the “key”)

- Number of rows and columns

- Size

For parsed data, the following information displays:

- Link to the .hex file

- The Build Model, Predict, and Inspect buttons

To make a prediction, check the checkboxes for the frames you want to use to make the prediction, then click the Predict on Selected Frames button.

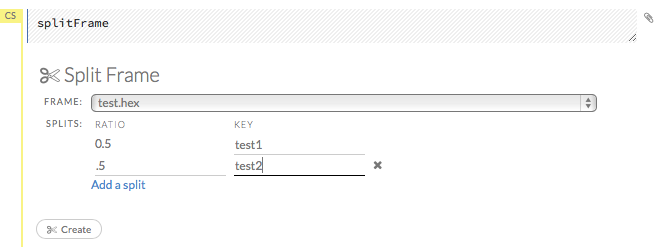

Splitting Frames

Datasets can be split within Flow for use in model training and testing.

- To split a frame, click the Assist Me! button, then click splitFrame. Note: You can also click the drop-down Data menu and select Split Frame….

- From the drop-down Frame: list, select the frame to split.

- In the second Ratio entry field, specify the fractional value to determine the split. The first Ratio field is automatically calculated based on the values entered in the second Ratio field.

Note: Only fractional values between 0 and 1 are supported (for example, enter .5 to split the frame in half). The total sum of the ratio values must equal one. H2O automatically adjusts the ratio values to equal one; if unsupported values are entered, an error displays.

- In the Key entry field, specify a name for the new frame.

- (Optional) To add another split, click the Add a split link. To remove a split, click the X to the right of the Key entry field.

- Click the Create button.
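The relationship between the Ratio fields can be illustrated with a small Python sketch. Assumptions: the function name `split_rows` is hypothetical, each row is assigned independently at random (so split sizes are only approximately proportional to the ratios), and the first split receives whatever fraction remains after the entered ratios, mirroring how Flow fills in the first Ratio field. H2O's internal splitting method may differ.

```python
import random


def split_rows(n_rows, entered_ratios, seed=None):
    """Randomly assign row indices to splits.

    entered_ratios: fractions typed into the second and later Ratio fields.
    The first split gets the remainder, 1 - sum(entered_ratios).
    """
    first = 1.0 - sum(entered_ratios)
    if first <= 0.0 or any(not 0.0 < r < 1.0 for r in entered_ratios):
        raise ValueError("ratios must be fractions between 0 and 1 that sum to less than 1")
    ratios = [first] + list(entered_ratios)

    # Cumulative cutoffs, e.g. [0.75, 1.0] for a 75/25 split.
    cutoffs = []
    running = 0.0
    for r in ratios:
        running += r
        cutoffs.append(running)

    rng = random.Random(seed)
    splits = [[] for _ in ratios]
    for row in range(n_rows):
        u = rng.random()
        # Fall back to the last split in case rounding leaves the final cutoff just below 1.0.
        index = next((i for i, c in enumerate(cutoffs) if u < c), len(ratios) - 1)
        splits[index].append(row)
    return splits


train, test = split_rows(10_000, [0.25], seed=1)
print(len(train), len(test))  # roughly 7500 and 2500
```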

Creating Frames

To create a frame with a large amount of random data (for example, to use for testing), click the drop-down Admin menu, then select Create Synthetic Frame. Customize the frame as needed, then click the Create button to create the frame.

Plotting Frames

To create a plot from a frame, click the Inspect button, then click the Plot button.

Select the type of plot (point, path, or rect) from the drop-down Type menu, then select the x-axis and y-axis from the following options:

- label

- type

- missing

- zeros

- +Inf

- -Inf

- min

- max

- mean

- sigma

- cardinality

Select one of the above options from the drop-down Color menu to display the specified data in color, then click the Plot button to plot the data.

Note: Because H2O stores enums internally as integers and then maps the integers to an array of strings, any min, max, or mean values for categorical columns are not meaningful and should be ignored. Displays for categorical data will be modified in a future version of H2O.
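The note above can be illustrated with a hypothetical categorical column stored as integer codes plus a domain of level strings. The Python snippet below is only an illustration of that storage idea, not H2O's internal format: relabeling the levels leaves the data unchanged but changes the mean of the codes.

```python
# A hypothetical categorical column stored as integer codes plus a domain (array of strings).
domain = ["blue", "green", "red"]       # level strings, indexed by code
codes = [2, 0, 0, 1, 2, 2]              # red, blue, blue, green, red, red

decoded = [domain[c] for c in codes]
mean_code = sum(codes) / len(codes)     # 7 / 6: not a meaningful "average color"

# Ordering the same levels differently changes the codes, and therefore min, max,
# and mean, even though the underlying data is identical.
relabeled_domain = ["red", "green", "blue"]
relabeled_codes = [relabeled_domain.index(v) for v in decoded]
relabeled_mean = sum(relabeled_codes) / len(relabeled_codes)

print(mean_code, relabeled_mean)
```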

Using Flows

You can use and modify flows in a variety of ways:

- Clips allow you to save single cells

- Outlines display summaries of your workflow

- Flows can be saved, duplicated, loaded, or downloaded

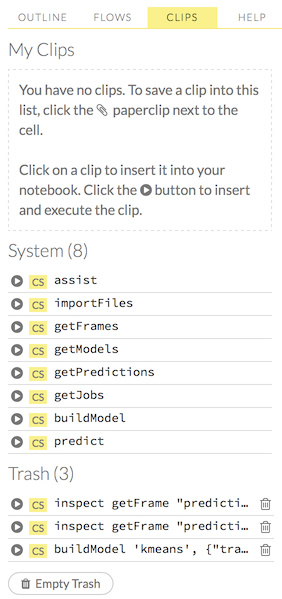

Using Clips

Clips enable you to save cells containing your workflow for later reuse. To save a cell as a clip, click the paperclip icon to the right of the cell (highlighted in the red box in the following screenshot).

To use a clip in a workflow, click the “Clips” tab in the sidebar on the right.

All saved clips, including the default system clips (such as assist, importFiles, and predict), are listed. Clips you have created are listed under the “My Clips” heading. To select a clip to insert, click the circular button to the left of the clip name. To delete a clip, click the trashcan icon to the right of the clip name.

NOTE: The default clips listed under “System” cannot be deleted.

Deleted clips are stored in the trash. To permanently delete all clips in the trash, click the Empty Trash button.

NOTE: Saved data, including flows and clips, persists as long as the same IP address is used for the cluster. If a new IP address is used, previously saved flows and clips are not available.

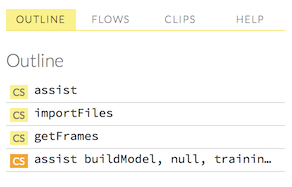

Viewing Outlines

The “Outline” tab in the sidebar displays a brief summary of the cells currently used in your flow; essentially, a command history.

- To jump to a specific cell, click the cell description.

- To delete a cell, select it and press the X key on your keyboard.

Saving Flows

- Finding Saved Flows on your Disk

- Saving Flows on a Hadoop cluster

- Copying Flows

- Downloading Flows

- Loading Flows

You can save your flow for later reuse. To save your flow as a notebook, click the “Save” button (the first button in the row of buttons below the flow name), or click the drop-down “Flow” menu and select “Save.” To enter a custom name for the flow, click the default flow name (“Untitled Flow”) and type the desired flow name. A pencil icon indicates where to enter the desired name.

To confirm the name, click the checkmark to the right of the name field.

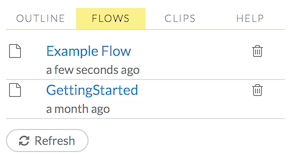

To reuse a saved flow, click the “Flows” tab in the sidebar, then click the flow name. To delete a saved flow, click the trashcan icon to the right of the flow name.

Finding Saved Flows on your Disk

By default, flows are saved to the h2oflows directory underneath your home directory. The directory where flows are saved is printed to stdout:

03-20 14:54:20.945 172.16.2.39:54323 95667 main INFO: Flow dir: '/Users/<UserName>/h2oflows'

To back up saved flows, copy this directory to your preferred backup location.
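If you back up flows regularly, the copy can be scripted. The Python sketch below copies the saved-flows directory into a new timestamped folder; the function name `backup_flows` and the default backup location `~/h2oflows-backups` are hypothetical choices, and you should pass your own -flow_dir path if you changed it.

```python
import shutil
import time
from pathlib import Path


def backup_flows(flow_dir=Path.home() / "h2oflows", backup_root=Path.home() / "h2oflows-backups"):
    """Copy the saved-flows directory into a new timestamped folder under backup_root."""
    flow_dir = Path(flow_dir)
    if not flow_dir.is_dir():
        raise FileNotFoundError(f"no saved-flows directory at {flow_dir}")
    destination = Path(backup_root) / time.strftime("h2oflows-%Y%m%d-%H%M%S")
    # copytree creates missing parent folders but fails if destination already exists,
    # so two backups started within the same second will not overwrite each other.
    shutil.copytree(flow_dir, destination)
    return destination


if __name__ == "__main__":
    print("Backed up to", backup_flows())
```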

To specify a different location for saved flows, use the command-line argument -flow_dir when launching H2O:

java -jar h2o.jar -flow_dir /<New>/<Location>/<For>/<Saved>/<Flows>